As the iPhone 12 Pro video says, this model is the photographer’s iPhone. Apple’s keynote introduced a dizzying array of new camera technology, and I want to dig in.

iPhone 12 Sensor Shift

First, let’s talk about the sensor. Optical Image Stabilization (OIS) first appeared on Apple’s smartphones in the iPhone 6 Plus, and later in the dual-lens camera of the iPhone 7 Plus. With OIS, the lens moves while you’re taking a photo or video to compensate for shaky hands, giving you less blurry photos and smoother videos.

Sensor Shift is different because the lighter image sensor is the moving part instead of the heavier lens. This technology was pioneered in DSLR cameras as In Body Image Stabilization (IBIS). When the iPhone 12 Pro Max detects shaking, the A14 chip calculates the direction and speed in real time and adjusts the sensor five thousand times per second.

In a DSLR camera, the advantage of this technology over OIS is that it works with any attached lens, since the stabilization happens in the body rather than in the lens. Presumably, the same will be true of the iPhone 12 Pro Max and add-on lenses from companies like Moment and Olloclip. Owners should still be able to enjoy image stabilization with these products, and I look forward to testing this.

According to Apple’s press release, the image sensor in the iPhone 12 Pro Max is 47% larger than in previous models. Sensor size is crucial in cameras. Forget Samsung with its 108-megapixel camera: a bigger sensor combined with a faster aperture captures more light, and light is what photography is all about.

More light means brighter photos with less noise and improved dynamic range. The iPhone 6s introduced the 12MP camera in 2015, and although the pixels have gotten larger and the camera has improved in other ways, the number of pixels hasn’t needed to change.
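To put the 47% figure in perspective, it helps to convert it into photographic stops, where one stop is a doubling of light. The short Python sketch below does that conversion. It assumes the light gathered scales linearly with sensor area at the same aperture and exposure time. The pixel sizes it uses (1.4 µm and 1.7 µm) come from Apple’s published specs rather than from anything above, so treat them as assumptions.

```python
import math

def area_ratio(old_pixel_um: float, new_pixel_um: float) -> float:
    """Ratio of pixel areas, assuming square pixels and an unchanged pixel count."""
    return (new_pixel_um / old_pixel_um) ** 2

def stops_gained(light_ratio: float) -> float:
    """One stop is a doubling of light, so stops are a base-2 logarithm."""
    return math.log2(light_ratio)

# The 47% figure quoted from Apple's press release.
print(f"47% more area: {stops_gained(1.47):.2f} stops")

# Cross-check with assumed pixel sizes (1.4 um before, 1.7 um on the Pro Max).
ratio = area_ratio(1.4, 1.7)
print(f"Pixel area ratio: {ratio:.2f}x, {stops_gained(ratio):.2f} stops")
```

Under those assumptions the larger sensor is worth a little over half a stop of extra light, before counting any gains from the lens or image processing.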

Apple ProRAW

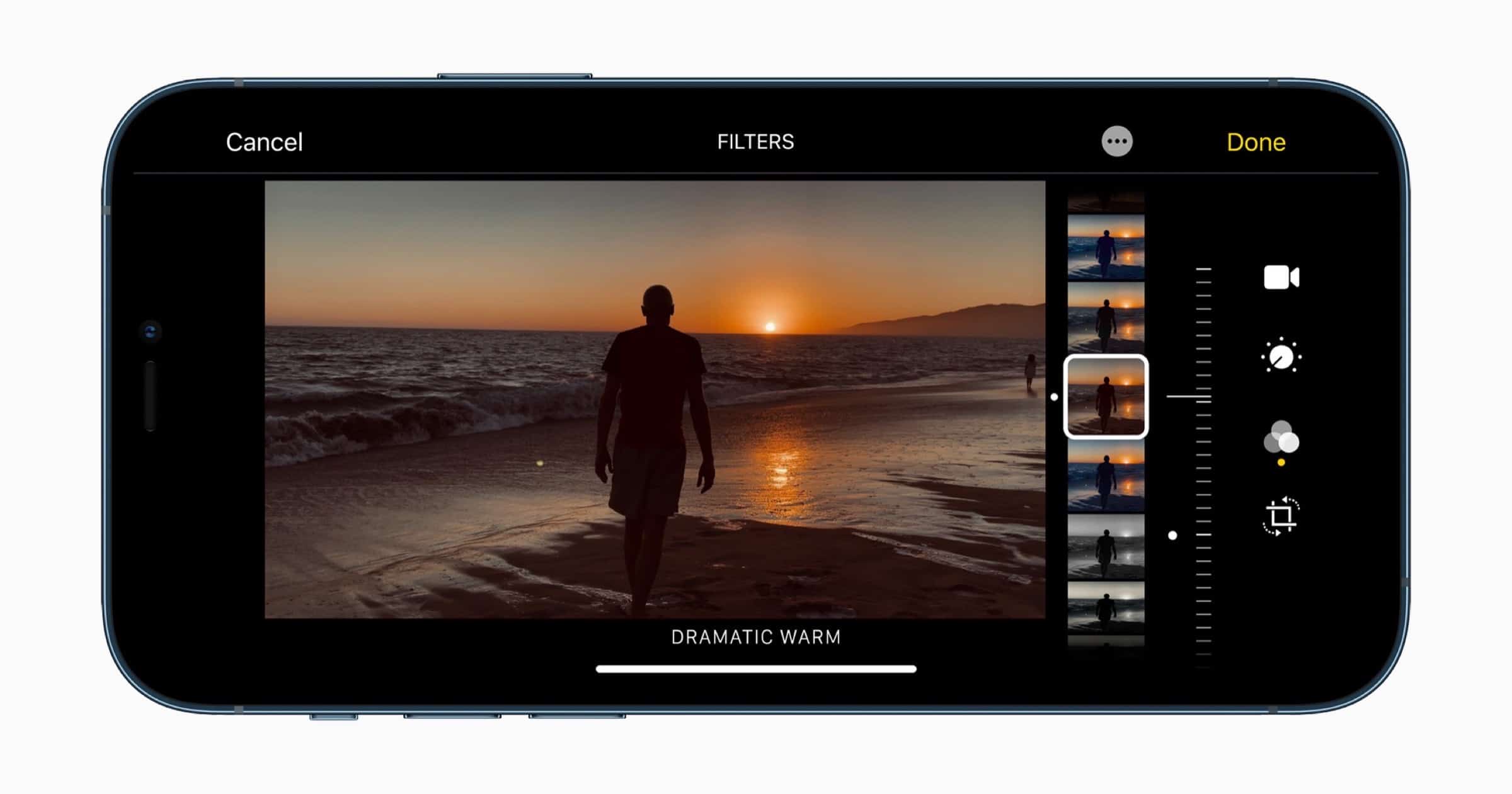

Apple also announced Apple ProRAW, which will arrive in a future iOS 14 update for the iPhone 12 Pro and iPhone 12 Pro Max. Alok Deshpande, Senior Manager of Apple camera software engineering, explained that ProRAW incorporates Deep Fusion and Smart HDR data, which sets it apart from other RAW formats.

A RAW photo is an image file that contains all of the data that the camera captures. When you take a photo with an iPhone or camera, it’s saved in a format like JPG or, in the case of iPhones, the alternative HEIC format. These are compressed file formats that discard some image data to save space. This is good in certain cases, like web images, because it means the file size is smaller.

RAW files are uncompressed and no data is discarded; they’re bigger because they contain all of the data that the sensor captures. You can edit RAW files to a higher degree than other formats while still maintaining image quality. Typical RAW edits include decreasing highlights, lifting shadows, and adjusting the white balance and color.

Importantly, if you save the original RAW file you can go back and re-edit the photo in a different way at the same quality. You can go from uncompressed (RAW) to compressed (JPG) but you can’t move in the other direction. Keep editing a JPG photo and it will slowly degrade in quality with each re-save.
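The difference between the two workflows can be sketched with a toy model in Python. This is not real JPG compression: rounding each value to a coarse step merely stands in for the data a lossy save discards, and the pixel values and edit sequence are hypothetical.

```python
def lossy_save(pixels, step=10):
    """Toy stand-in for a lossy save: snap every value to a multiple of `step`."""
    return [min(255, int(p / step + 0.5) * step) for p in pixels]

def apply_gain(pixels, gain):
    """A simple brightness edit: scale every value by `gain`."""
    return [p * gain for p in pixels]

def mean_abs_error(a, b):
    """Average absolute difference between two equally long lists."""
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)

original = [23, 57, 91, 128, 164]  # hypothetical sensor values
edits = [1.2, 0.8, 1.2, 0.8]       # brighten, darken, brighten, darken

# Reference: all edits applied with no lossy save at all.
ideal = original
for gain in edits:
    ideal = apply_gain(ideal, gain)

# RAW-style workflow: edit from the untouched original, then export once.
raw_export = lossy_save(ideal)

# JPG-style workflow: every edit is followed by a lossy re-save.
chained = original
for gain in edits:
    chained = lossy_save(apply_gain(chained, gain))

raw_error = mean_abs_error(raw_export, ideal)
chained_error = mean_abs_error(chained, ideal)
print(f"Export once from RAW: mean error {raw_error:.2f}")
print(f"Re-save after each edit: mean error {chained_error:.2f}")
```

In this toy run the chained workflow ends further from the reference than the single export, because each save rounds values that the next edit then builds on.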

The A14 chip processes photos with the CPU, GPU, and Neural Engine to capture as much image data as possible. For the first time, Apple has been able to achieve this with all four Pro cameras (three rear + front). ProRAW will be available for shooting in the default Camera app and for editing in the Photos app, and Apple is releasing an API so that third-party photography apps can take advantage of it too.

LiDAR

We first saw a LiDAR sensor arrive with the iPad Pro earlier in 2020. This technology is a way to measure distance by bathing the target in laser light and measuring how long it takes for the light to bounce back. It’s also known as a Time of Flight sensor. LiDAR enhances the iPhone 12’s capabilities for augmented reality because it lets the device sense depth more accurately.
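The time-of-flight idea comes down to one line of arithmetic: the light travels to the target and back, so the distance is half the round trip multiplied by the speed of light. Here is a minimal Python sketch of that relationship. The example timings are illustrative only and say nothing about how Apple’s sensor is actually implemented.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458  # metres per second, in a vacuum

def distance_from_round_trip(seconds: float) -> float:
    """Distance to the target given the round-trip time of a light pulse."""
    return SPEED_OF_LIGHT_M_PER_S * seconds / 2

def round_trip_for_distance(metres: float) -> float:
    """Round-trip time a pulse needs to reach a target and return."""
    return 2 * metres / SPEED_OF_LIGHT_M_PER_S

# A pulse that returns after 20 nanoseconds hit something about 3 m away.
print(f"{distance_from_round_trip(20e-9):.2f} m")

# A target 1 m away returns the pulse in under 7 nanoseconds.
print(f"{round_trip_for_distance(1.0) * 1e9:.2f} ns")
```

The tiny numbers show why this takes dedicated hardware: resolving centimetres means timing light to within fractions of a nanosecond.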

Besides AR, the iPhone 12 Pro models use LiDAR for accurate autofocus, especially when taking photos or videos in low-light conditions. Apple says autofocus in low light is up to six times faster than on previous models.

Dolby Vision Video

iPhone 12 Pro and Pro Max can shoot HDR video in Dolby Vision at up to 60 frames per second. Dolby Vision is Dolby’s HDR video format, and as John Martellaro explains, it supports higher peak brightness, 12-bit color, and the full BT.2020 color space.
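To see what a bit depth like 12-bit means in practice, the Python sketch below counts the brightness levels each color channel can take and the total number of colors that result. It assumes three channels (red, green, blue) with the same bit depth, which is a simplification of how video is actually encoded.

```python
def levels_per_channel(bits: int) -> int:
    """Number of distinct values a single color channel can hold."""
    return 2 ** bits

def total_colors(bits: int, channels: int = 3) -> int:
    """Distinct colors when every channel has the same bit depth."""
    return levels_per_channel(bits) ** channels

for bits in (8, 10, 12):
    print(f"{bits}-bit: {levels_per_channel(bits):,} levels per channel, "
          f"{total_colors(bits):,} colors")
```

Going from 8-bit to 12-bit raises the count from 256 to 4,096 levels per channel, which is what allows smoother gradients in skies and shadows.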

For the iPhone 12 customer this means brighter brights, darker darks, sharper contrast, and vivid colors. Apple suggested in its keynote that the iPhone 12 could be part of a Hollywood director’s toolkit. Of course, you’ll also need a TV or other device that supports Dolby Vision to view these videos as the camera intended.

Conclusion

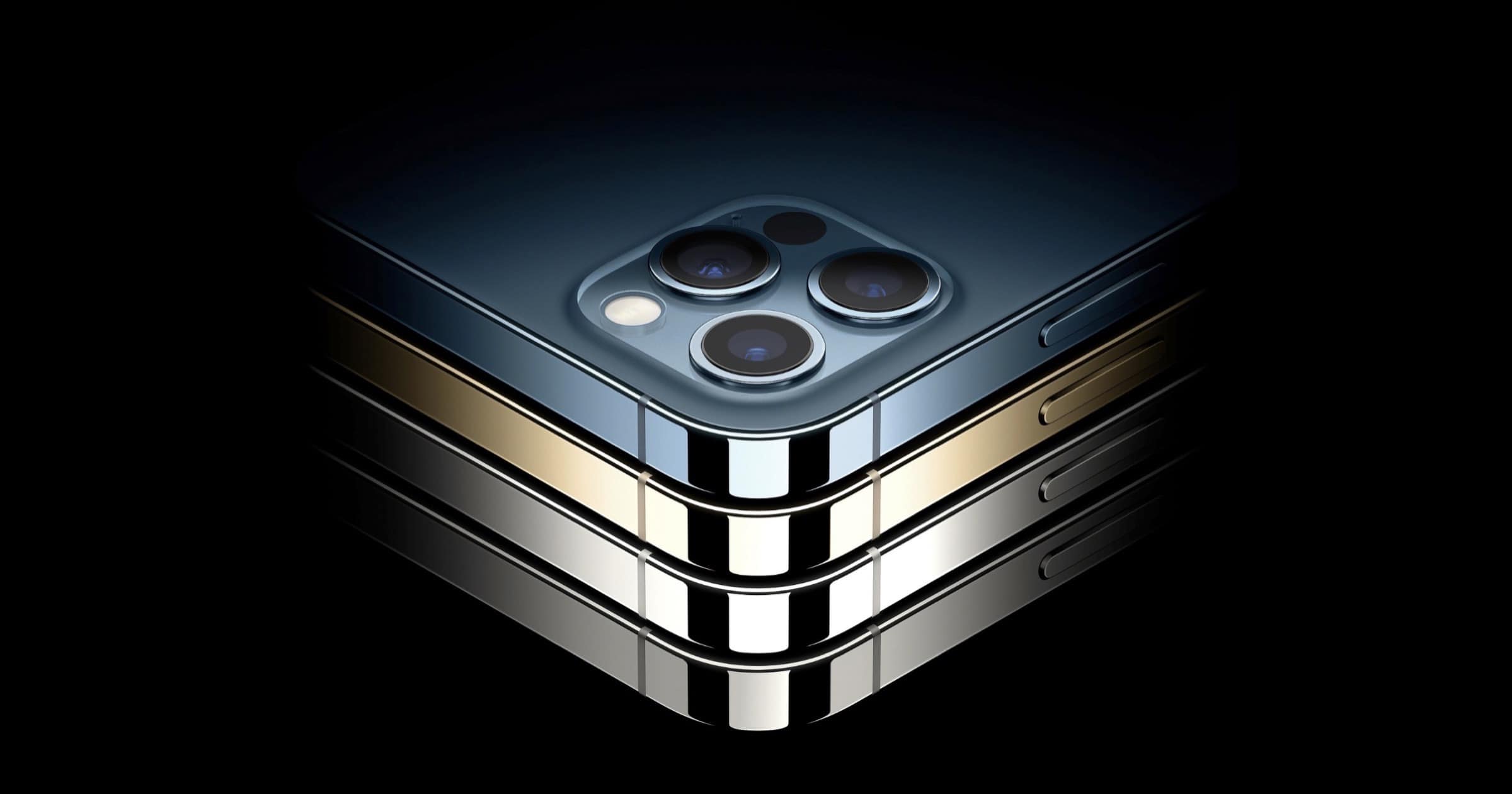

Overall, I was impressed by the similarities among the iPhone 12 models. Some may see that as a negative, but it means that Apple is holding back fewer features to upsell. The iPhone 12 mini has the same display technology as the iPhone 12 Pro Max, and the two Pro models share 99% of camera features.

With the iPhone 12 Pro Max you get an ƒ/2.2 aperture in the Telephoto lens, 2.5x optical zoom in, 5x optical zoom range, and digital zoom up to 12x. When shooting video you’ll get 2.5x optical zoom in and digital zoom up to 7x. And of course you’ll get a bigger screen and battery.

The iPhone 12 Pro gives you an ƒ/2.0 aperture for the Telephoto, 2x optical zoom in, 4x optical zoom range, and digital zoom up to 10x. In video recording you’ll get 2x optical zoom in and digital zoom up to 6x. Otherwise, the apertures of the Wide and Ultra Wide lenses are the same on both models.

The lines have blurred in all other areas.
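The zoom and aperture figures for the two Pro models relate to each other through simple arithmetic, sketched below in Python. The 2x optical zoom out (the Ultra Wide lens) is an assumption taken from Apple’s spec sheet rather than from the numbers quoted above, and the light comparison assumes the same exposure time on both lenses.

```python
def optical_zoom_range(zoom_in: float, zoom_out: float) -> float:
    """Total optical range from the widest lens to the longest lens."""
    return zoom_in * zoom_out

def relative_light(f_number_a: float, f_number_b: float) -> float:
    """How many times more light aperture A admits than aperture B."""
    return (f_number_b / f_number_a) ** 2

ULTRA_WIDE_ZOOM_OUT = 2.0  # assumed: 2x optical zoom out on both Pro models

print(f"Pro Max range: {optical_zoom_range(2.5, ULTRA_WIDE_ZOOM_OUT):g}x")
print(f"Pro range: {optical_zoom_range(2.0, ULTRA_WIDE_ZOOM_OUT):g}x")

# The Pro's f/2.0 Telephoto against the Pro Max's f/2.2 Telephoto.
print(f"f/2.0 admits {relative_light(2.0, 2.2):.2f}x the light of f/2.2")
```

So the longer reach of the Pro Max Telephoto comes with a slightly slower aperture, a trade-off of roughly a fifth less light through that lens.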

I had convinced myself that I would get the iPhone 12 or at most the 12 Pro. But the sensor shift and some other unique features of the Max made me decide on that model. So rats, I have to wait another month! Not that my iPhone X doesn’t work fine; it does. I’m just ready for some things it lacks, and this phone does it for me like I never imagined. (Former professional photographer switching systems on retirement and not wanting to fork out for a 12-24 zoom lens just now.)