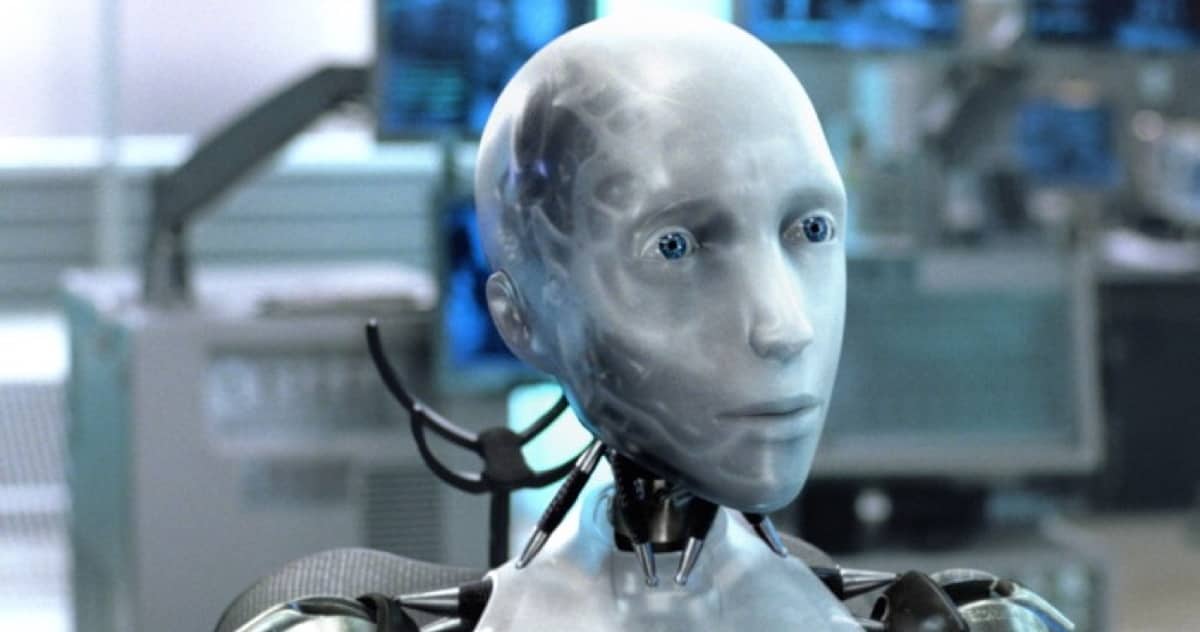

Should there be occasions when advanced AIs, especially robots or androids, refuse a command from a human being? Asimov’s Three Laws of Robotics (mostly) dictate the rules, assuming the robot has been programmed with that in mind. However, there are nuances worth further discussion, and they depend on some very sophisticated thinking (and predictions) by the robot. It’s all on page 2 of Particle Debris.

Check It Out: When Should a Robot just Say “No!”