There, Apple, I fixed it for you

Apple’s CSAM privacy fiasco (see my Sunday article) continues with apparently contradictory “clarifications.” In trying to tell the public “there’s nothing to see here,” Apple released two documents on its use of CSAM technology: an FAQ and a CSAM Technical Summary (PDF links).

The CSAM Technical Summary seems to say scanning takes place “on-device” while the FAQ seems to contradict that with a flat “No”.

First, Just the FAQs

Apple’s FAQ states:

August 2021 v1.1 (highlighting added)

The above makes it seem, to me, unequivocal that there is “No” reading of photo files that happens on your iPhone, i.e., no on-device scanning. But the language seems lawyerly and weaselly in at least two spots:

“[is] Apple…going to scan all the photos stored on my iPhone?”

“this feature only applies to photos that the user chooses to upload to iCloud Photos”

If you read those statements hyper-literally, they do not answer the intended question broadly. To me, and I think to most reasonable people, a ‘private iPhone photo library’ means ‘anything on my iPhone’ whereas Apple seems to be hyper-technically defining ‘private iPhone photo library’ as ‘a library not selected for uploading to iCloud Photos’.

So, for example, if you have 2 photo albums on your iPhone (e.g., Album A and Album B) and you “choose” to upload only Album A to iCloud Photos, Apple will scan only Album A. So technically they are not scanning “all the photos stored on [your] phone”.

Technically True But Misleading

Nevertheless, that still means Apple can scan some of your files (i.e., all files from Album A) on your iPhone and the above FAQ statements can still be true. Worse still, many users don’t consciously choose anything. They basically have all their photos hit iCloud (e.g., via Photo Stream, monolithically turning on iCloud Photos for all their photos, etc.). As such, most users won’t know that Apple’s CSAM detection will scan most if not all photos on their phone, which, I think, is contrary to the spirit of Apple’s above FAQ.

Why does that matter? The question of where Apple reads your files is super important. If Apple reads/scans photo files you chose to send to iCloud, and that only happens on Apple’s iCloud server, well, no big deal. That’s no longer on your device, and you chose to put it up on the cloud.

But if the scanning happens on your iPhone, then shame on Apple. Why? Because then Apple is neither informing you nor getting your permission to do things to your device and data, and it’s invading your privacy. Apple has then effectively created a potentially privacy-destroying backdoor to your device and data.

That distinction may seem small and subtle, but it’s everything.

And Now the CSAM Technical Summary

Now let’s compare the above FAQ with Apple’s CSAM Technical Summary:

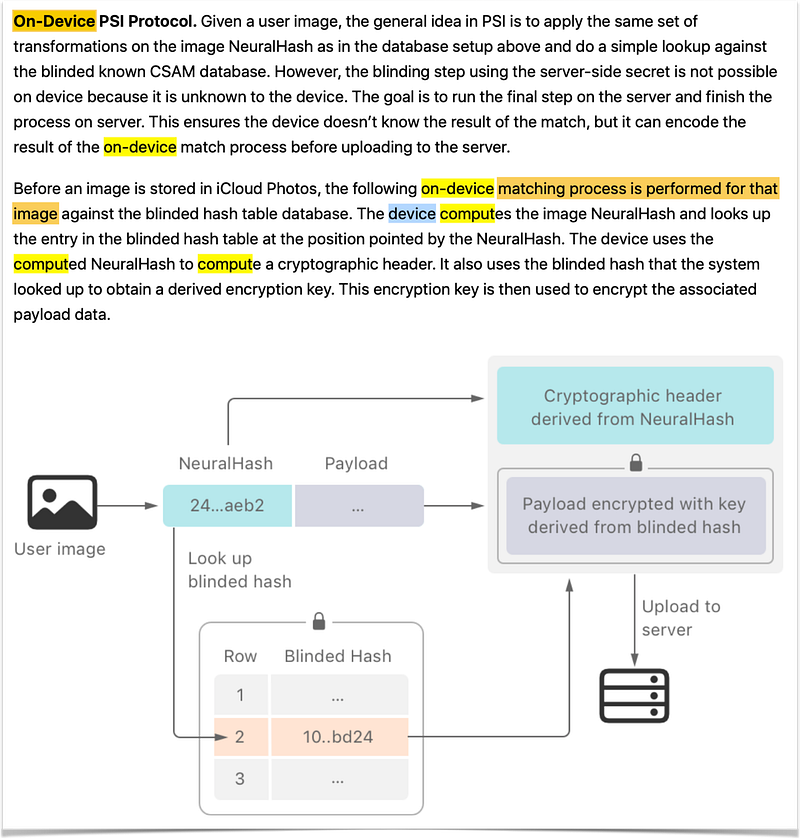

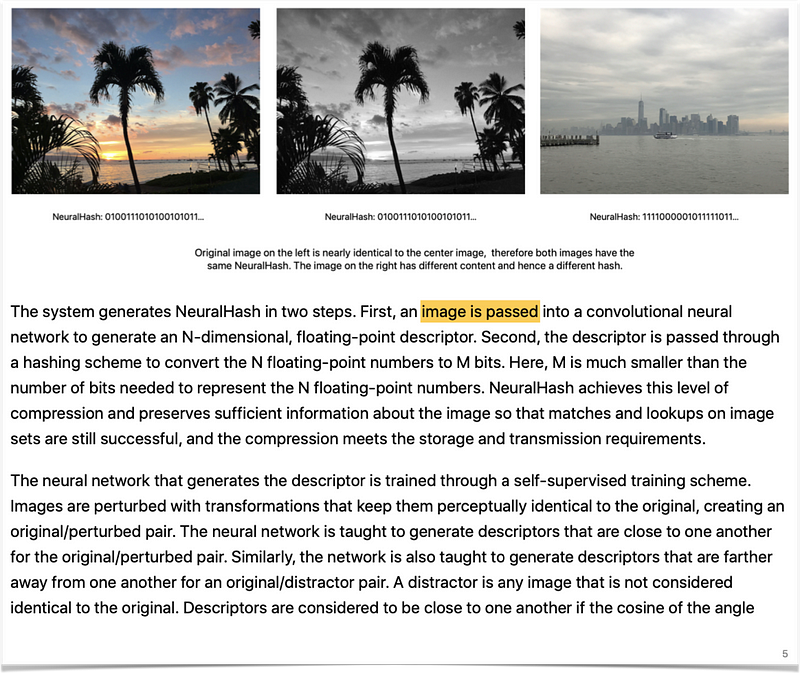

Apple’s CSAM Technical Summary makes it apparently clear that it operates “on-device”, that is, on your device/iPhone. For the “comput[ation]” of the image NeuralHash, the on-device matching process accesses, processes and transforms data from your image files. And to process your image, it must read into your files. The language throughout these documents consistently obscures the fact that your files are being read on your device, deftly avoiding even a single instance of the word “read”. Even so, page 5 of Apple’s CSAM Technical Summary does tell us that’s what’s going on:

That the “image is passed” seems to be a euphemism for what Apple is doing, which is reading into your photo files on your device to create the NeuralHash. Bottom line, Apple’s CSAM implementation seems to have some process on-device that has access to your files and reads into them to create these hashes.
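To make that point concrete, here is a minimal Python sketch. It is emphatically not NeuralHash, which is a perceptual hash and whose internals I am not reproducing here. As an assumption for illustration only, it substitutes an ordinary SHA-256 hash, and the names `hash_file` and `demo` are made up. The only thing it shows is the general principle: you cannot derive a hash from a file’s contents without some process opening the file and reading its bytes.

```python
import hashlib
import os
import tempfile


def hash_file(path):
    """Return (hex_digest, bytes_read) for the file at `path`.

    SHA-256 is a stand-in chosen for illustration; it is NOT NeuralHash.
    """
    digest = hashlib.sha256()
    bytes_read = 0
    with open(path, "rb") as f:  # the file must be opened...
        for chunk in iter(lambda: f.read(8192), b""):
            digest.update(chunk)  # ...and every byte fed to the hash
            bytes_read += len(chunk)
    return digest.hexdigest(), bytes_read


def demo():
    """Hash a throwaway stand-in 'photo' and report how much was read."""
    data = b"\x89PNG placeholder pixel data" * 100  # hypothetical image bytes
    with tempfile.NamedTemporaryFile(delete=False) as tmp:
        tmp.write(data)
        path = tmp.name
    try:
        digest, bytes_read = hash_file(path)
    finally:
        os.remove(path)
    return digest, bytes_read, len(data)


if __name__ == "__main__":
    digest, bytes_read, size = demo()
    print(f"read {bytes_read} of {size} bytes to produce hash {digest[:16]}...")
```

Whatever the real hashing function is, the shape is the same: the number of bytes read equals the size of the file, so a hash computed on-device means the file was read on-device.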

You’ve Lost That Loving Feeling

Apple pleads that you can trust it not to allow others to abuse such a backdoor process. But like I said earlier:

[C]ode is infinitely mutable…Even if you trust people at Apple to do the right thing today, the people there tomorrow may not have the same power, inclinations, or agenda. A simple change of management and a software patch update, and now the criteria and those pulling the strings are different.

Considering Apple’s FAQ, seemingly and perplexingly, is either, at best, deftly misleading, or, at worst, outright lying, I think it has exhausted all trust.

To all of you that are now proven wrong, enjoy the crow.

https://macdailynews.com/2023/08/31/apple-explains-its-csam-scanning-about-face-scanning-for-one-type-of-content-opens-the-door-for-bulk-surveillance/

https://9to5mac.com/2022/12/07/apple-confirms-that-it-has-stopped-plans-to-roll-out-csam-detection-system/

I was right.

All the Mac press will now pretend that they were always against this. That it was a bad idea. But when it counted to speak up, they pretended to be ‘neutral’ at best, or outright cowered and supported this atrocity. Back then it took guts to stand against it, and like always, you could count on the cowardly tech/Mac press to play lackey and not speak up for the right thing.

I really like this article you shared. Thanks for taking the time to write it.

I disagree with the idea that Apple can or should SEARCH/SCAN through ANY of MY DATA regardless of where it is stored.

So I disagree with your suggested premise that WHERE the data is stored changes the rights to privacy of that data. I’m NOT sharing my data with the general public.

If you simply want to share/transfer/access data between your own devices (phone, computer, etc), you have to use iCloud.

Just moving photos from iPhone to computer sometimes happens through iCloud.

Beyond PHOTOS:

CONTACTS, with all their notes, data, photos, are stored in iCloud.

NOTES, with all that data and photos, are stored in iCloud.

The fact that iCloud servers are owned by Apple and wires are owned by Internet companies doesn’t CHANGE THE OWNERSHIP of the data.

DATA is mine….and Apple/Govt should stay out of my data…..regardless of what might be in my data….and especially without a court order.

Just because Apple is “only” using this technology to go after “child porn and abuse” does not logically/legally validate their action.

You know I tend to agree with you, but I think where does matter. You’re putting data on THEIR property, on their server. What if you put malware on their server that would destroy their server and all data on it? Don’t they have a right to protect their own property?

On the other hand, I can see your perspective in that it’s a bit like you renting space on their server, so you should be able to do what you like. But if you rent a house, that also does not give you free rights to burn that house down because ultimately, it’s not your house.

But I see your point of view and think it’s a perfectly reasonable point of view.

The greater point, on which I think we are violently agreeing past each other, is that on the devices WE OWN, yeah, bzzzt, no one should do anything we did not authorize.

Excellent analysis, John. I’m still going through an internal debate about this situation. On the one hand, I truly believe we should maintain rights to privacy on our iPhones. On the other hand, I’m vehemently enraged by any abuse or exploitation of children.

I think that, at a minimum, Cupertino needs to publish an explanation that is both easily accessible to end users of all levels of education and in agreement with the technical documentation already released. This sort of controversial technology is not the right place for the volumes of technobabble we’ve been seeing from Apple.