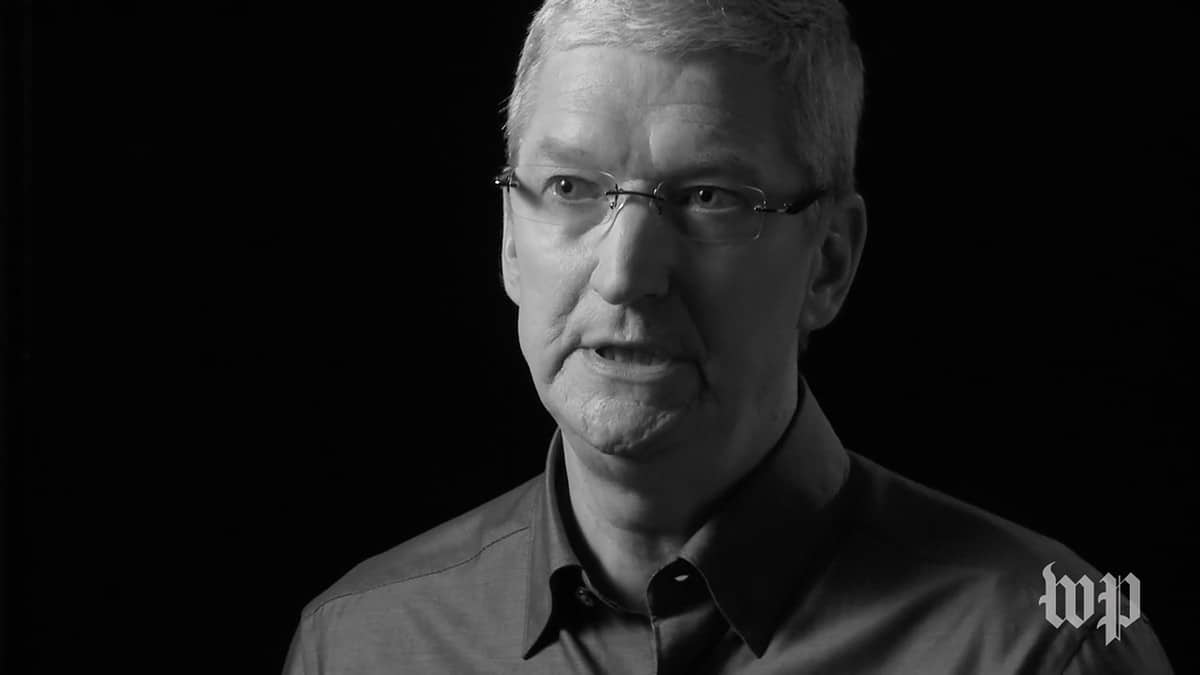

During Apple’s 2018 Q2 Earnings Report, Apple CEO Tim Cook said, “we believe privacy is a fundamental human right.” That’s a strong and inspiring stand.

Mr. Cook said this twice: first in his opening preamble, and again in response to a question from Amit Daryanani (RBC Capital) about how Apple protects our privacy. Cook enumerated three practices that Apple uses:

- Encryption

- Keeping sensitive data on the device

- Collecting less data [than others]

When the question framed privacy as a “benefit,” Cook seemed to reiterate that privacy is a right, not merely a benefit.

The U.S. Constitution

If memory serves, this is the first time CEO Cook has made such an explicit statement about Apple’s view of privacy, even though Apple has been acting on (and emphasizing) this belief for years. The question one might ask now is: is this merely a corporate opinion? Is it a legal positioning for future cases? And does it have some basis in the U.S. Constitution?

This short news item cannot delve into such weighty legal matters. However, one good place to start the discussion is that last question. Is privacy an inalienable personal right in the U.S.? Globally? A quick search turned up a very readable analysis from the University of Missouri (Kansas City).

That’s an interesting, informative read. However, it’s more historical and doesn’t try to address modern issues with smartphones. More broadly, it’ll be interesting to see where Apple goes with this. Mr. Cook has had several meetings with the current U.S. President. Has he laid out this corporate stand?

Moreover, do Apple’s attorneys believe they can make a (modern) Constitutional case? (Perhaps something derived from the previous legal kerfuffle with the FBI?) Or is Cook simply laying the foundation for more explicit legislation that he hopes will be forthcoming, legislation that would avert a future legal crisis over Apple’s use of encryption?

We don’t yet have a good picture of where Apple is going with this affirmation, but Mr. Cook clearly and forcefully intended to put the issue squarely on all our minds going forward.

Fundamental human right? No way, bub. The way I see it, there are two “privacies.” One is your personal privacy, and the other is, well, not much, because it is ‘social’ privacy: bank accounts (read *your* BoA TOS lately??) and YouTube, Facebook, email, or ANY other stuff you put out over the web, which of course, by your own will, is NOT private. Even in the first case, who says you should have a right to privacy in a world made up of interdependent species? Seems pretty ridiculous there. Can anyone name a few worldwide tragedies that were prevented or warned about because a veil of privacy was lifted?

Timmy’s company has a lot of money and a lot of flapping gums, but what has Apple done except make a lot of money? Easy to throw stones when you aren’t even IN the game with Google, Amazon, or even Tesla or Nvidia vis-à-vis AR, AI and such, where “privacy” is a catch-22, i.e. “how can I help you if I don’t know you” type stuff. Draw the line… rrrrrright. I get software update notifications from Apple, both in-app and from the App Store’s obnoxious red badge with a white number in it. How does Apple know what I have? Seems stupid, but it’s the same invasion of privacy: I can’t turn off Apple’s ability to KNOW what I have on my entire system. Even if I turn off MY end of notifications, APPLE still “invades my privacy” and hides it in their TOS as well. Just an example, and like I said, Apple hasn’t even gotten into the Alexa and AR/AI game, or the EV car game, or Game game, or….. rant over. 🖖🎸 Pro Tools time……

@wab95 – I do agree with you that Apple has to engage with China rather than walk away. My problem with what Tim is doing is the blanket statement he is making. He needs to explicitly say, “We believe that privacy is a fundamental human right, but we also recognize that we do business in countries where the leadership does not feel the same way. We’re engaged with them, trying to change this…” – rather than leaving the impression with everyone that there’s no difference in how Apple treats my personal data and how they treat the data of someone living in China.

Old UNIX Guy

John:

More than a flag in the sand, Cook’s statement reads like a beachhead, from which Apple plan not merely to defend but to extend user privacy, leading from the front. Yes, it will be interesting to see how this unfolds in the coming years, but this is likely to be a protracted fight, with reversals and setbacks along the way. However, I believe that Apple are on the right side of history on this issue, and that ultimately this position will prevail.

@Old UNIX Guy:

I’d caution against that interpretation. I live in Asia and travel the region extensively, and can attest firsthand that China is a complex environment that requires a nuanced and long-term approach. This is precisely the tack that the people there have taken, with substantial gains in rights and access to goods, services and yes, even information, however curated. In my living memory, this was a closed society. NB: this was the society on which North Korea patterned itself, and North Korea remains a living fossil of that period and practice.

Apple’s approach is almost quintessentially appropriate, culturally and politically, to the setting: being a good citizen, being seen as playing by the rules, and taking a long-term, patient approach to extending its influence without appearing to threaten either the rulership or social stability. Those who have done otherwise get shut down, disappear or are expelled. Apple have taken Mao’s own approach in dealing with the Communist Party: ‘No participation, no right to observation’. You’ve got to be present and engaged if you want to make change.

This is a long game of three-dimensional chess.

John,

Talk is cheap; actions are what matter, and Tim Cook’s actions don’t match his words here.

Hey Tim, what about the users of your products who happen to live in China???? You can say whatever you want, but by your actions you very clearly think, at a minimum, that maximizing Apple’s profits is more important than the privacy of your users in China. It’s time to put up or shut up on this issue, Tim…

Old UNIX Guy

Apple wouldn’t be in China if they didn’t work with the Commies running the country. So yeah, in that instance they are selling out for money, but in the USA the situation is different, for the time being.