Texas Attorney General Ken Paxton filed a lawsuit against Meta seeking civil penalties over Facebook’s facial recognition practices.

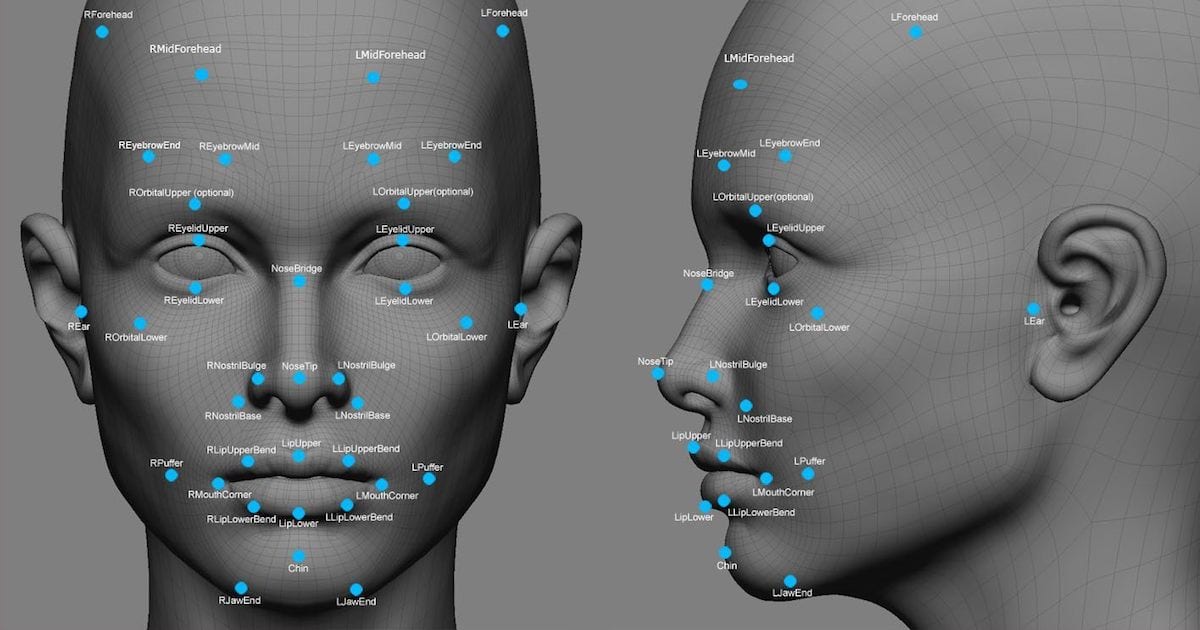


IRS Will No Longer Require Facial Recognition for Taxes

Backpedaling amid public backlash, the IRS won’t require citizens to use facial recognition for taxes, The New York Times reported.

Treasury Considers ID.me Alternatives Over Privacy Concerns

The Treasury Department is looking into ID.me alternatives for accessing the IRS website over privacy concerns.

CEO Blake Hall this week said that the company also used one-to-many technology, which compares selfies taken by users as part of the verification process against a larger database. The company said it maintained an internal database of selfies taken by users and compared new selfies against it using Amazon’s controversial Rekognition technology. As of January 25, 20.9 million users’ selfies had been verified against that database, the company said.

ID.me CEO Admits Company Uses '1:Many' Facial Recognition

ID.me CEO Blake Hall wrote in a LinkedIn post that his company uses 1:many facial recognition. CyberScoop explains how this contradicts an ID.me press release saying the company does not use this technology. A 1:many search compares one face against a large database of photos to work out who a person is. That is different from the 1:1 face match proposed for IRS verification through ID.me, which only checks whether a selfie matches a single reference photo of the person claiming the identity.

“We could disable the 1:many face search, but then lose a valuable fraud fighting tool. Or we could change our public stance on using 1:many face search,” an engineer wrote in a message posted to a company Slack channel on Tuesday. “But it seems we can’t keep doing one thing and saying another as that’s bound to land us in hot water.”
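To make the 1:1 versus 1:many distinction concrete, here is a minimal Python sketch. It assumes each face has already been turned into a numeric "embedding" vector by some face model and compares vectors with cosine similarity. The vectors, names, and 0.9 threshold are made up for illustration; this is not how ID.me, Rekognition, or any other vendor's system is actually built.

```python
import math

# Hypothetical face embeddings: real systems get these vectors from a neural network.
Embedding = list[float]

# Hypothetical match threshold; real systems tune this against error rates.
THRESHOLD = 0.9


def cosine_similarity(a: Embedding, b: Embedding) -> float:
    """Similarity of two embeddings, from -1.0 (opposite) to 1.0 (identical direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def verify_one_to_one(selfie: Embedding, reference: Embedding,
                      threshold: float = THRESHOLD) -> bool:
    """1:1 verification: does this selfie match ONE claimed person's photo?"""
    return cosine_similarity(selfie, reference) >= threshold


def search_one_to_many(selfie: Embedding, gallery: dict[str, Embedding],
                       threshold: float = THRESHOLD) -> list[str]:
    """1:many identification: which people in the whole database could this be?

    Returns every enrolled name above the threshold, best match first.
    """
    scored = [(name, cosine_similarity(selfie, emb)) for name, emb in gallery.items()]
    matches = [(name, score) for name, score in scored if score >= threshold]
    matches.sort(key=lambda item: item[1], reverse=True)
    return [name for name, _ in matches]


# Usage with a tiny made-up database of three enrolled faces.
gallery = {
    "alice": [1.0, 0.0, 0.1],
    "bob": [0.0, 1.0, 0.0],
    "carol": [0.9, 0.1, 0.1],
}
selfie = [1.0, 0.05, 0.1]

print(verify_one_to_one(selfie, gallery["alice"]))  # True
print(search_one_to_many(selfie, gallery))  # ['alice', 'carol']
```

In this toy example the 1:1 check only answers yes or no about Alice, while the 1:many search scans everyone and returns two candidates for a single selfie. With a large enough database, lookalikes can clear the threshold, which is one reason 1:many searches raise more privacy and accuracy concerns.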

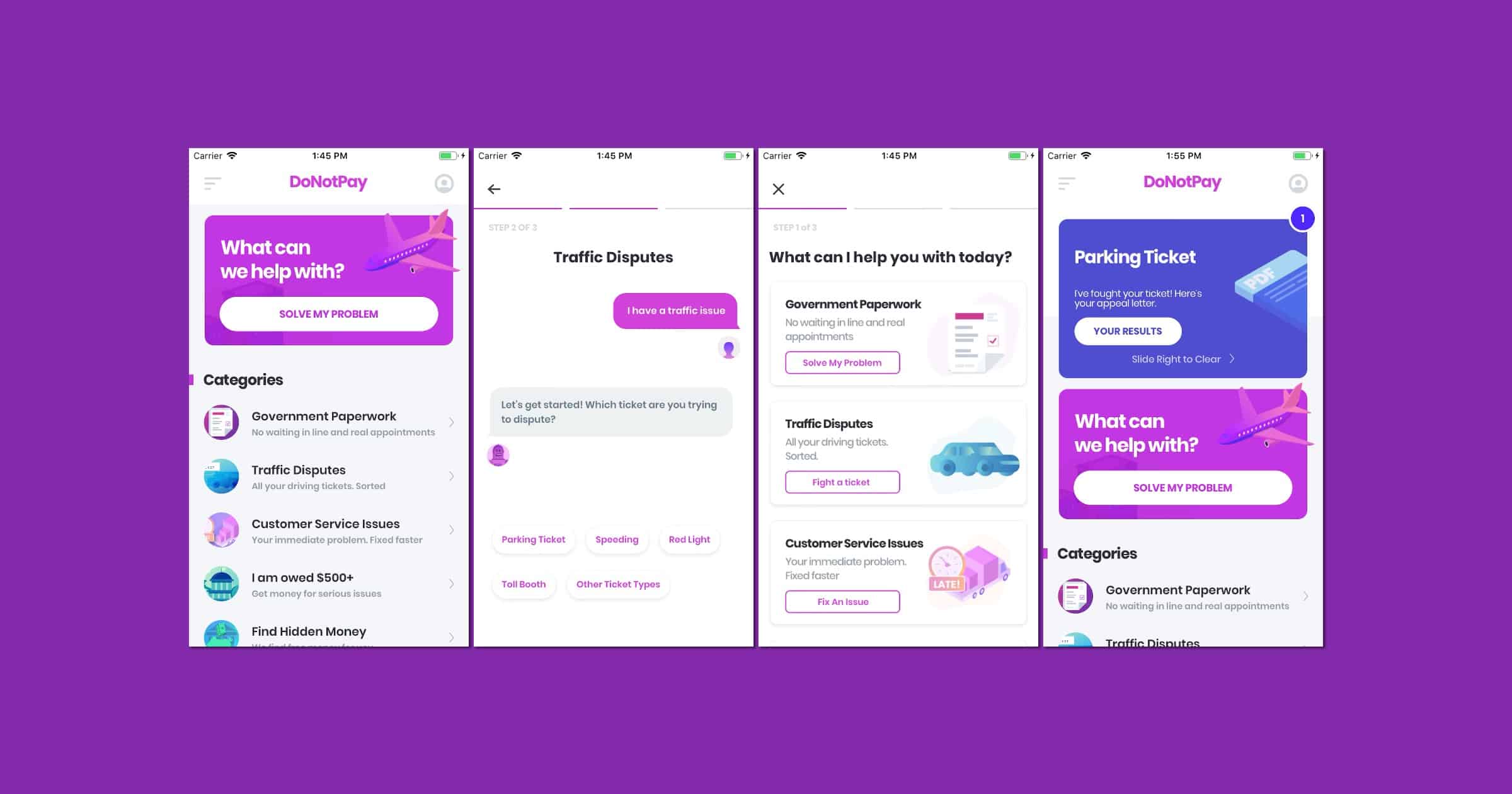

Photo Ninja by DoNotPay Can Confuse Facial Recognition Algorithms

DoNotPay can perform a variety of tasks for you, like cancelling subscriptions, appealing parking tickets, and dealing with copyright protection. It has a new service called Photo Ninja that can help block facial recognition.

Photo Ninja uses a novel series of steganography, detection perturbation, visible overlay, and several other AI-based enhancement processes to shield your images from reverse image searches without compromising the look of your photo.

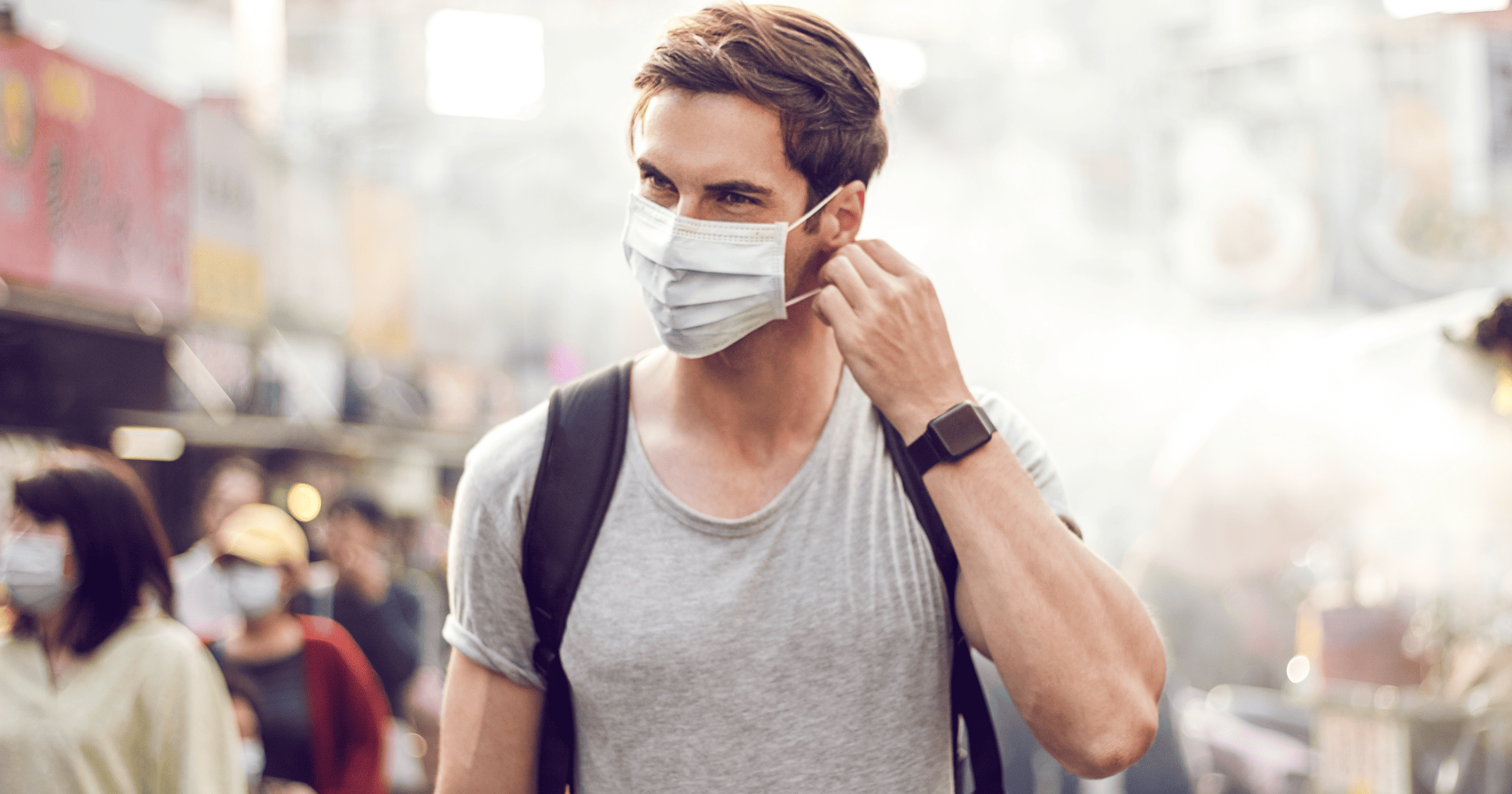

New Facial Recognition Tech Works Even When People Are Wearing Masks

Japan’s NEC has launched a facial recognition system that works even when people are wearing masks. Customers for the tool include Lufthansa and Swiss International Airlines, Shinya Takashima, assistant manager of the company’s digital platform division, told Reuters. (BBC News also reported that London’s Metropolitan Police uses the technology.)

The system determines when a person is wearing a mask and hones in on the parts that are not covered up, such as the eyes and surrounding areas, to verify the subject’s identity. Users register a photo of their face in advance. NEC says verification takes less than one second and claims an accuracy rate of more than 99.9%. The system can be used at security gates in office buildings and other facilities. NEC is also trialing the technology for automated payments at an unmanned convenience store in its Tokyo headquarters.

LAPD Bans Commercial Facial Recognition Technology

The Los Angeles Police Department has banned its officers from using commercial facial recognition tools such as Clearview AI.

The LAPD, the third-largest police department in the United States, issued a moratorium on the use of third-party facial recognition software on Nov. 13, after it was told that documents seen by BuzzFeed News showed more than 25 LAPD employees had performed nearly 475 searches using Clearview AI as of earlier this year. Department officials have made conflicting statements in the past about their use of facial recognition technology, including claims that they deploy it sparingly.

Portland Facial Recognition Ban Strongest in Country

Portland’s facial recognition ban, passed on Wednesday, is the strongest such law in the United States so far.

Utah Is Now a Surveillance State Thanks to This Company

A surveillance company called Banjo has partnered with Utah state authorities to enable a dystopian panopticon.

The lofty goal of Banjo’s system is to alert law enforcement of crimes as they happen. It claims it does this while somehow stripping all personal data from the system, allowing it to help cops without putting anyone’s privacy at risk. As with other algorithmic crime systems, there is little public oversight or information about how, exactly, the system determines what is worth alerting cops to.

Apple Blocks Clearview AI iPhone App

Apple has blocked Clearview AI’s iPhone app, saying it violated the terms of its enterprise program because the app wasn’t for internal use.

Someone Stole Clearview AI’s List of Clients

Clearview AI gained notoriety for partnering with law enforcement on facial recognition, using its database of billions of scraped images from the web. But someone just stole its list of clients.

…Clearview AI disclosed to its customers that an intruder “gained unauthorized access” to its list of customers, to the number of user accounts those customers had set up, and to the number of searches its customers have conducted. The notification said the company’s servers were not breached and that there was “no compromise of Clearview’s systems or network.”

Meanwhile, law enforcement on end-to-end encryption: “Who needs that kind of encryption, other than maybe the military? We don’t even — in law enforcement — use encryption like that.”

Clearview AI Helps Law Enforcement With Facial Recognition

In a long read for The New York Times, Kashmir Hill writes about a startup called Clearview AI that works with law enforcement on facial recognition.

You take a picture of a person, upload it and get to see public photos of that person, along with links to where those photos appeared. The system — whose backbone is a database of more than three billion images that Clearview claims to have scraped from Facebook, YouTube, Venmo and millions of other websites — goes far beyond anything ever constructed by the United States government or Silicon Valley giants.

Students Want to Ban College Facial Recognition

Students for Sensible Drug Policy and Fight for the Future are teaming up to ban facial recognition from college campuses.

Facial recognition surveillance spreading to college campuses would put students, faculty, and community members at risk…Schools that are already using this technology are conducting unethical experiments on their students. Students and staff have a right to know if their administrations are planning to implement biometric surveillance on campus. Grassroots organizing stopped facial recognition from ruining music festivals. Now we’re going to stop it from invading university campuses.

Homeland Security Cancels Facial Recognition Plan for Americans

Homeland Security had a plan to expand its use of airport facial recognition to include U.S. citizens. After much outcry, the agency will drop that plan, although foreign nationals and visitors will still face mandatory scanning.

A spokesperson for Customs and Border Protection, which filed the proposal, said the agency has “no current plans to require U.S. citizens to provide photographs upon entry and exit from the United States,” and that it “intends to have the planned regulatory action regarding U.S. citizens removed from the unified agenda next time it is published.”

Like an Addict, Facebook Is Chasing Even More of Our Data, Now With Facial Scans

Researcher Jane Manchun Wong found that Facebook is working on a feature called “facial recognition-based identity verification.” It would ask users to upload a video selfie of themselves looking in different directions before they can access their account.

On that same screen and later in the actual video selfie process, Facebook notes that “no one else will see” the video selfie you submit to them and says the video will be “deleted 30 days after your identity is confirmed.”

Deleted after 30 days. Based on Facebook’s past actions, we can safely assume it will do the exact opposite. There’s not much room to give them the benefit of the doubt.

ACLU Sues FBI Over Facial Recognition

The ACLU is suing the FBI over its use of secret facial recognition technology. The agency has a database of roughly 640 million faces.

Australia, Please Don't Scan My Face When I Download Porn

The U.K. recently canceled its plans for an age filter on porn websites, but now Australia has taken up the mantle. It wants internet users to verify their identity using facial recognition before viewing pornography.

Writing in a submission to the House of Representatives Standing Committee on Social Policy and Legal Affairs’ inquiry, launched in September, Home Affairs said it could provide a “suite of identity-matching services”.

One example highlighted by the department was the use of the Face Verification Service to prevent a child using their parent’s driver licence to get around any age verification.

At this point, my writing about porn is a running joke. But stories like this raise awareness of important privacy issues.

Your Kids' Photos Power Surveillance Technology

The New York Times has a nice feature out today about how a mother found photos of her kids in a machine learning database.

None of them could have foreseen that 14 years later, those images would reside in an unprecedentedly huge facial-recognition database called MegaFace. Containing the likenesses of nearly 700,000 individuals, it has been downloaded by dozens of companies to train a new generation of face-identification algorithms, used to track protesters, surveil terrorists, spot problem gamblers and spy on the public at large. The average age of the people in the database, its creators have said, is 16.

I can’t imagine the gross feeling you get when you see your kids in a database like this.

Apple Headset Patent, Google Buying Facial Data – TMO Daily Observations 2019-07-24

Bryan Chaffin and Andrew Orr join host Kelly Guimont for a discussion of an Apple “headset” patent and Google’s offer to buy facial data.

Your Facial Data is Worth a $5 Gift Card to Google

Google employees are stopping people in public and offering them a US$5 gift card in exchange for their facial data. The company is thought to be working on a Face ID-style authentication system for the Pixel 4.

“I assume they’ll use the data to train a neural network to be able to recognize what a face is,” he replied. “Then you train your own phone on what your specific face looks like. And that’s what gets used to unlock your phone, Face ID-style, but more accurately.”

Add three zeroes to that, Google, and then I’ll discuss it.

Microsoft Does Something Unexpected About Privacy

According to Engadget, “Microsoft discreetly wiped its massive facial recognition database.”

Microsoft has been vocal about its desire to properly regulate facial recognition technology. The company’s president, Brad Smith, appealed directly to Congress last year to take steps to manage the tech, which he says has “broad societal ramifications and potential for abuse.” Such are the company’s concerns that it even blocked the sales of the tech to California police forces. Now, Microsoft is continuing its crusade by quietly deleting its MS Celeb database, which contains more than 10 million images of some 100,000 people.

These days, it seems that everything involving tech privacy keeps getting worse. Deleting a big data set is hard to do. Good work, Microsoft.

IBM Sells Technology to a Dictatorship...Again

IBM is no stranger to selling stuff to dictators. First it was the Nazis, now it’s the United Arab Emirates.

But even as [facial recognition] technology comes under more scrutiny in the United States, tech giants such as IBM, and China’s Hikvision and Huawei, are marketing biometric surveillance systems in the UAE, where citizens have fewer options to push back. The UAE has used cellphone hacking software to spy on hundreds of dissidents, journalists, and suspected criminals, and has invested heavily in surveillance technology, according to human rights groups and international media reports.

Colorado Students Secretly Photographed for Military Research

From 2012 to 2013, students at the University of Colorado’s Colorado Springs campus were secretly photographed as part of a research project. The U.S. Navy wanted to improve its facial recognition algorithms.

To conduct the study, [professor] Boult set up a long-range surveillance camera in an office window about 150 meters away from the West Lawn of the Colorado Springs campus, a public area where passers-by would not have a reasonable expectation of privacy. The camera surreptitiously photographed people walking in the area of the West Lawn on certain days during the spring semesters of 2012 and 2013.

Facebook Facial Recognition Opt-Out Not Universal

Consumer Reports found that Facebook’s facial recognition opt-out setting doesn’t appear for every user, despite Facebook promising otherwise.

Consumer Reports examined the accounts of 31 Facebook users across the U.S. The participants let us record video as they navigated their Facebook settings under our direction. We found the Face Recognition setting missing from eight of the accounts we documented, or just over 25 percent.

I could be a smart a** and recommend deleting your Facebook account as a way to opt out, but that wouldn’t help the people still on Facebook.