New iPadOS adoption numbers from Apple have piqued Ken’s interest. TMO Managing Editor Jeff Butts joins Ken to kick those around. Plus – Jeff dives into AI and Apple’s Neural Engine.

Machine Learning

What Is the Apple Neural Engine and What Does It Do?

You likely hear about the Neural Engine without really knowing what Apple uses it for. Let’s dig deep into this crucial technology.

A.I. Imitators and A.I. Problem Solvers - TMO Daily Observations 2023-01-11

On a day that Apple trumpets the many successes of its Services segment, ripoff ChatGPT apps are infesting Apple’s App Store. TMO Managing Editor Jeff Butts joins Ken to discuss the issue. Plus – generative A.I. isn’t just for drawing pictures and cheating on term papers anymore. Jeff looks at ways A.I. is building the cures of tomorrow.

Disappointing and Delightful Tech - TMO Daily Observations 2022-12-16

An OfCom study on smart speaker use prompts TMO Managing Editor Jeff Butts and Ken to talk tech that disappoints and tech that delights.

'TinyML' Wants to Bring Machine Learning to Microcontroller Chips

TinyML is a joint project between IBM and MIT. It’s a machine learning project capable of running on low-memory and low-power microcontrollers.

[Microcontrollers] have a small CPU, are limited to a few hundred kilobytes of low-power memory (SRAM) and a few megabytes of storage, and don’t have any networking gear. They mostly don’t have a mains electricity source and must run on cell and coin batteries for years. Therefore, fitting deep learning models on MCUs can open the way for many applications.

Leak Shows Crime Prediction Software Targets Black and Latino Neighborhoods

Here’s some news from the beginning of the month that I missed. Gizmodo and The Markup analyzed PredPol, a crime prediction software used in the U.S.

Residents of neighborhoods where PredPol suggested few patrols tended to be Whiter and more middle- to upper-income. Many of these areas went years without a single crime prediction.

By contrast, neighborhoods the software targeted for increased patrols were more likely to be home to Blacks, Latinos, and families that would qualify for the federal free and reduced lunch program.

AWS Launches No-Code ML Service Called Amazon SageMaker Canvas

Amazon SageMaker Canvas is a new machine learning service that doesn’t require any coding. It lets you build ML models and generate predictions.

SageMaker Canvas leverages the same technology as Amazon SageMaker to automatically clean and combine your data, create hundreds of models under the hood, select the best performing one, and generate new individual or batch predictions. It supports multiple problem types such as binary classification, multi-class classification, numerical regression, and time series forecasting. These problem types let you address business-critical use cases, such as fraud detection, churn reduction, and inventory optimization, without writing a single line of code.

Adobe Ramps Up AI-based Editing, Intros Web-based Photoshop for Creative Cloud

Adobe unveiled new versions of its Creative Cloud apps at Adobe MAX on Tuesday. The updated creative design apps rely more on AI and machine learning for editing, Photoshop and Illustrator for iPad get some new features, and now you can use Photoshop in a web browser.

Apple ML Study Compares Supervised Versus Self-Supervised Learning

A research team at Apple published a study in October examining supervised and self-supervised algorithms. The title is “Do Self-Supervised and Supervised Methods Learn Similar Visual Representations?” From the abstract:

We find that the methods learn similar intermediate representations through dissimilar means, and that the representations diverge rapidly in the final few layers. We investigate this divergence, finding that it is caused by these layers strongly fitting to the distinct learning objectives. We also find that SimCLR’s objective implicitly fits the supervised objective in intermediate layers, but that the reverse is not true.

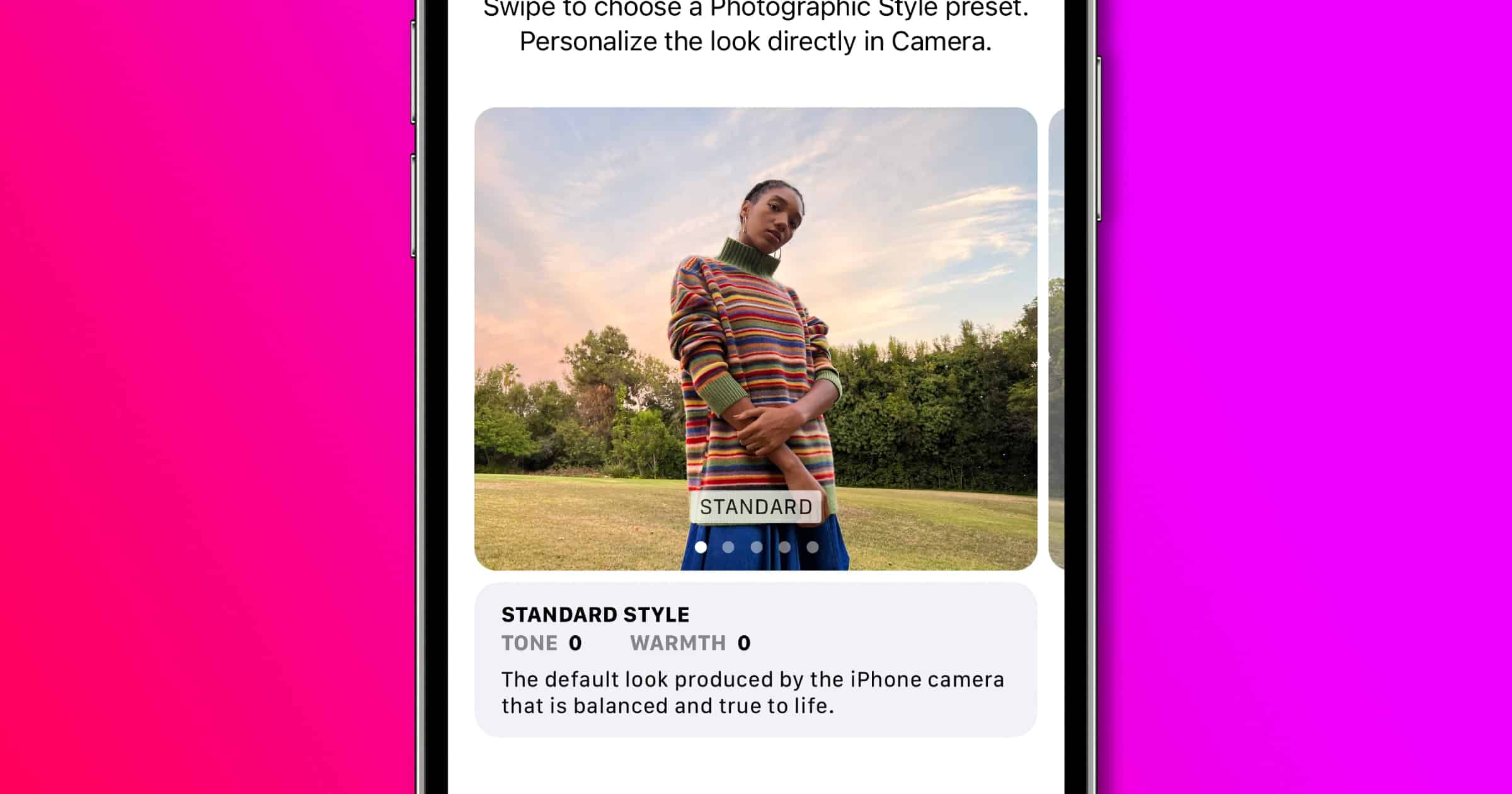

How to Use Photographic Styles With Your iPhone 13

iPhone photographers can enjoy a new feature with their iPhone 13 called Photographic Styles. It’s available with all of the models in the iPhone 13 product line.

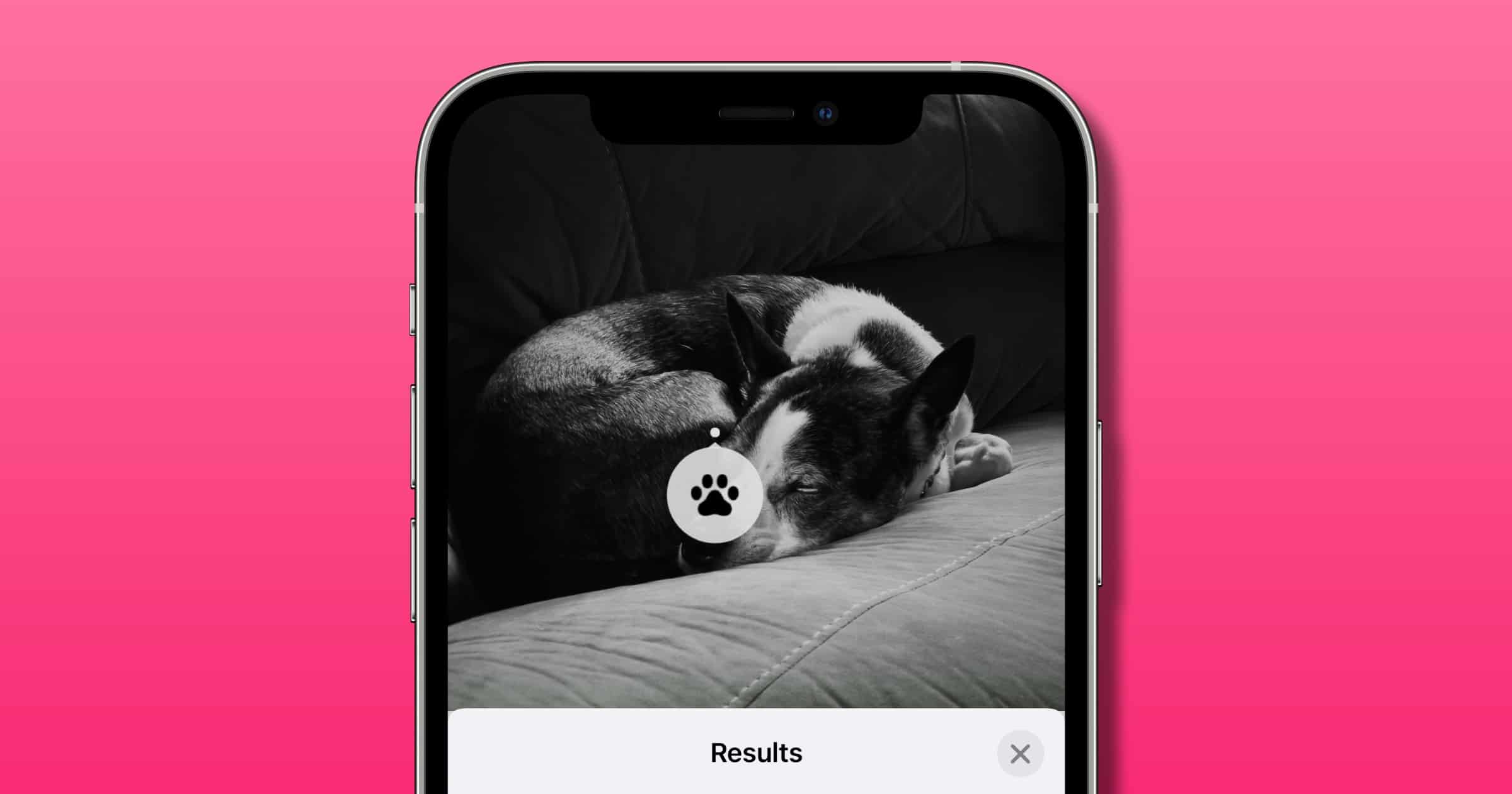

iOS 15: Apple Photos Has a New ‘Visual Search’ Feature

The Photos app is getting a new feature in iOS 15 called Visual Look Up, and it leverages powerful on-device machine learning.

This Man Wants to Decipher the Languages of Animals

Aza Raskin was the person who invented the “infinite scroll” feature we see often on social media. Now he wants to use machine learning to decipher animal language.

A library of all the different animal communication data sets that were machine learning ready. Everyone was working in their own silos, and we saw an opportunity to create a kind of perspective-changing machine: to look at the difference between humpback communication and elephant communication and sperm whale communication and bat communication.

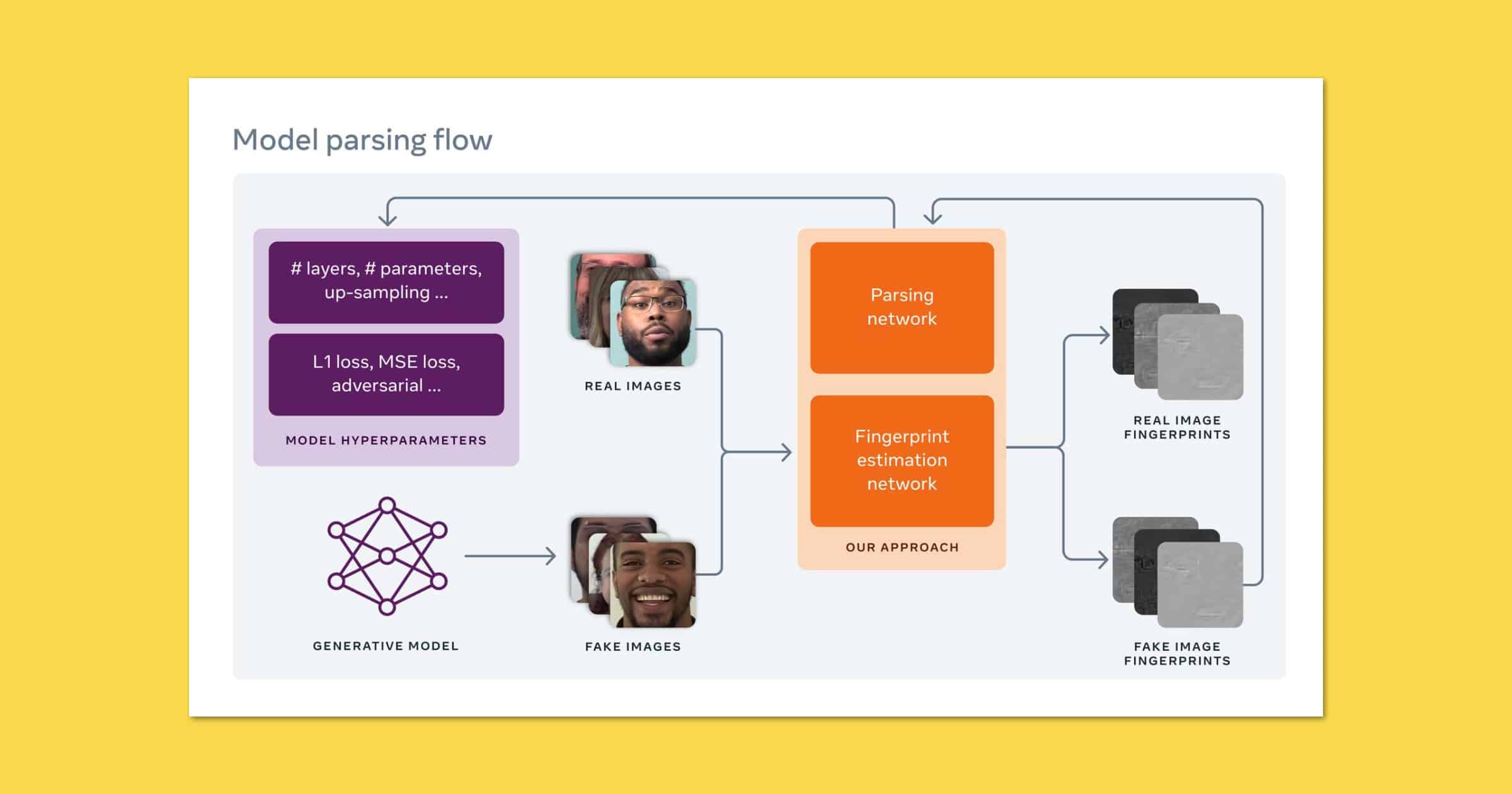

Facebook and Michigan State University Work on Deepfake Detection

Facebook and Michigan State University are working on a deepfake detection system that can reverse-engineer the fakes.

Bryan and Jeff Peek into the Future of Smarthomes and AI - ACM 546

Bryan Chaffin is joined by Jeff Gamet to speculate about the future of smarthomes and artificial intelligence, looking towards a future when these technologies work smoothly and have a real impact on how we live.

Robot Lawyer ‘DoNotPay’ Adds Tax Fraud to its Repertoire

DoNotPay is a machine learning tool that handles a variety of tasks, such as canceling free trials and appealing parking tickets.

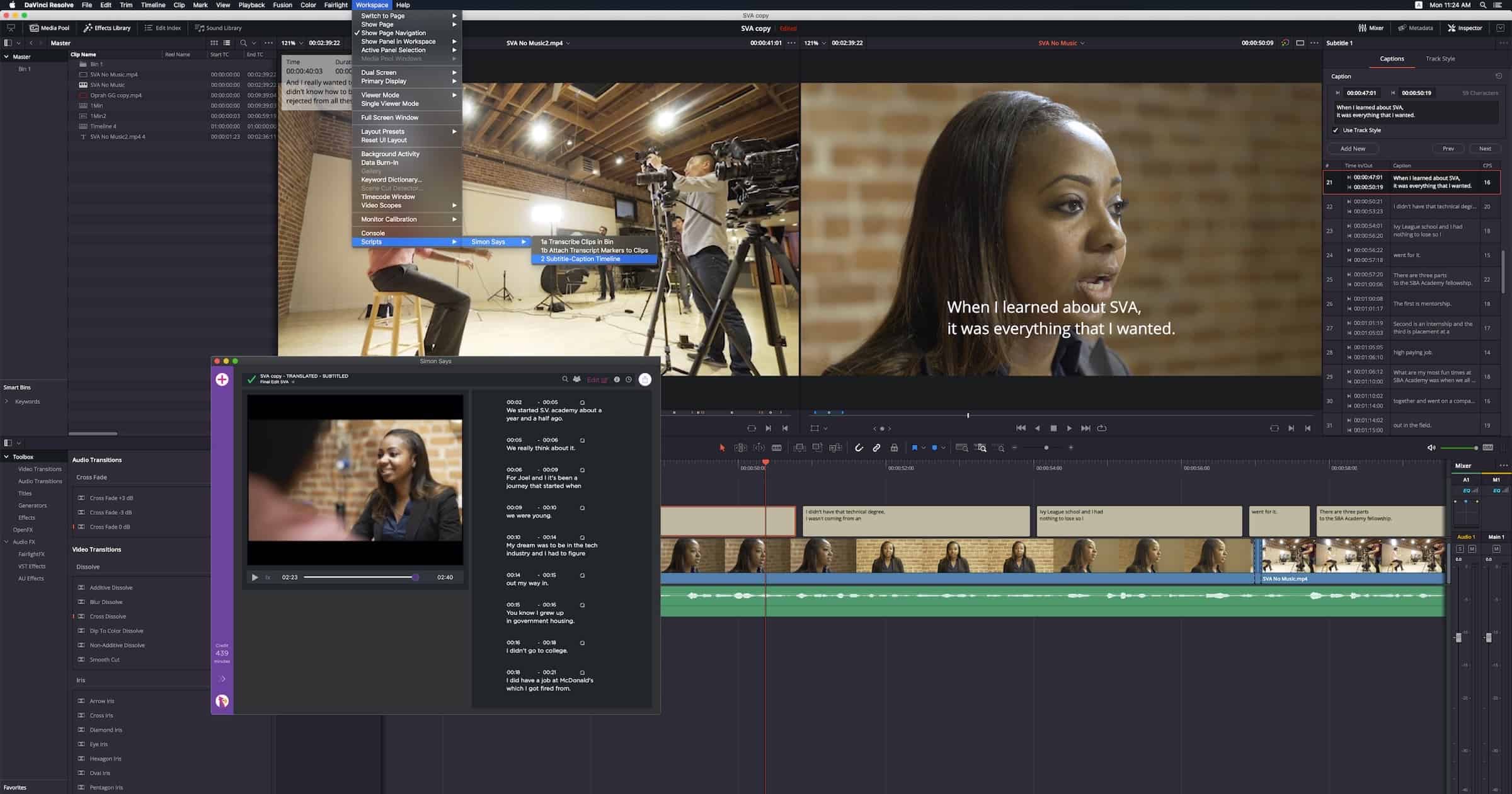

Simon Says is Bringing AI Transcription to DaVinci Resolve on Mac

Simon Says announced a partnership on Thursday to bring AI transcription to video editor DaVinci Resolve on macOS.

Delve Into the ‘AI Dungeon’ for Text-Based Adventures

AI Dungeon is a text-based adventure game where the adventures are generated on the fly by machine learning. This means there is a near-infinite number of adventures you can play. It’s available on the web and as an app for Android and iOS. There are two worlds you can play in: Xaxas (shown above) and Kedar (shown below). Xaxas is a world of peace and prosperity. It is a land in which all races live together in harmony. Kedar is a world of dragons, demons, and monsters. There are also other ways to play AI Dungeon, like choosing a theme, playing multiplayer, or not choosing a world.

Pixelmator Photo 1.4 Brings ML Super Resolution to iPad

The Pixelmator Photo 1.4 update brings ML Super Resolution to the iPad. This is the feature introduced on macOS that lets you upscale images using machine learning. “Today’s update also adds a very awesome comparison slider, letting you quickly compare your edited image with the original in a split-screen view. And it works all around the app, so when using the Repair tool, you can turn on and move the comparison slider to see just the changes made with that tool. When the Color Adjustments tool selected, you’ll see just the color changes, and so on. Super useful.” Finally, the company has raised the app’s price to US$7.99, up from US$4.99.

China Would Rather TikTok Be Shut Down Than Sold

A report on Friday says that China would rather TikTok be shut down instead of being sold to a U.S. company.

However, Chinese officials believe a forced sale would make both ByteDance and China appear weak in the face of pressure from Washington, the sources said, speaking on condition of anonymity given the sensitivity of the situation.

ByteDance said in a statement to Reuters that the Chinese government had never suggested to it that it should shut down TikTok in the United States or in any other markets.

Here’s what I think this means. China is all about the AI, and based on reports its algorithms seem to be more advanced than even invasive Facebook. China doesn’t want the U.S. to know just how much more advanced its algorithms are. Read: China export ban of such technology.

Apple AI/ML Residency Program Invites Experts to Collaborate

Apple has launched an AI/ML residency program that invites experts to build machine learning and AI powered products and experiences.

Apple’s Senior VP of Machine Learning Talks Strategy

John Giannandrea, Apple’s Senior Vice President for Machine Learning and AI Strategy, and Bob Borchers, VP of Product Marketing, spoke with Ars Technica about Apple’s AI strategy and beliefs.

“When I joined Apple, I was already an iPad user, and I loved the Pencil,” Giannandrea (who goes by “J.G.” to colleagues) told me. “So, I would track down the software teams and I would say, ‘Okay, where’s the machine learning team that’s working on handwriting?’ And I couldn’t find it.” It turned out the team he was looking for didn’t exist, a surprise, he said, given that machine learning is one of the best tools available for the feature today.

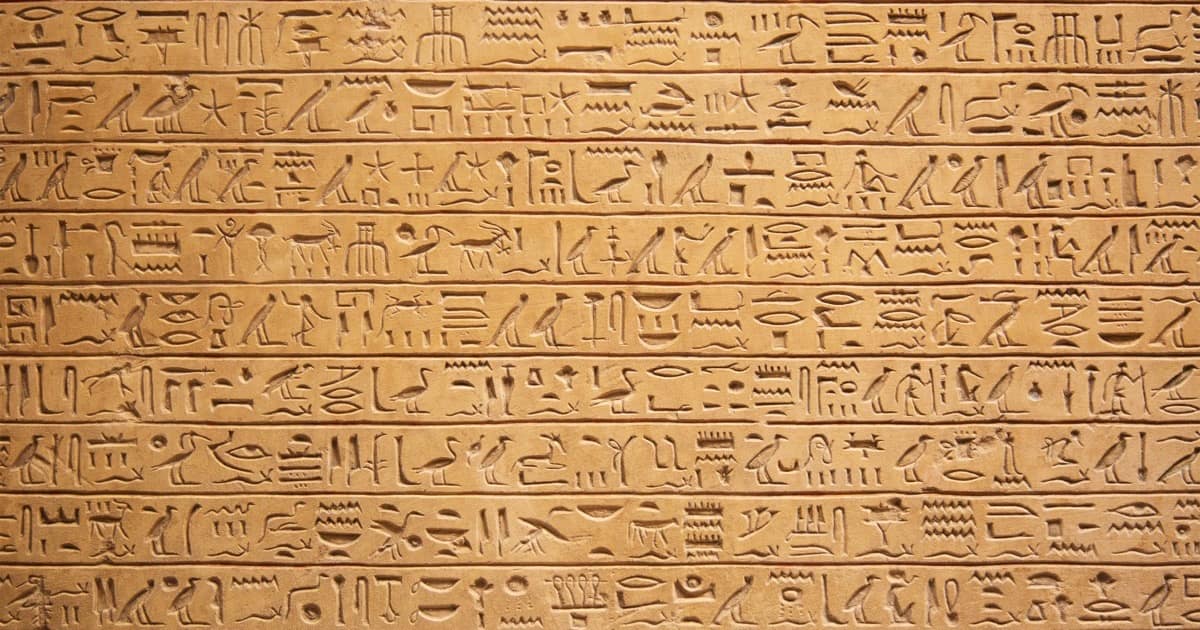

Google’s ‘Fabricius’ Tool Uses ML to Decode Hieroglyphs

Here’s something cool that Google has created: A web tool called “Fabricius” that uses machine learning to decode hieroglyphs.

So far, experts had to dig manually through books upon books to translate and decipher the ancient language–a process that has remained virtually unchanged for over a century. Fabricius includes the first digital tool – that is also being released as open source to support further developments in the study of ancient languages – that decodes Egyptian hieroglyphs built on machine learning.

Apple Acquires Machine Learning Startup Inductiv to Improve Data Siri Uses

Apple has acquired machine learning startup Inductiv, Inc to improve Siri, machine learning, and Apple’s data science endeavors.

How to Dig Into the Apple Photos SQLite Database

Now here’s a cool article I found last night. Simon Willison found the SQLite database that Apple Photos uses. It contains photo metadata as well as the aesthetic scoring system that the machine learning uses. Further, there are numeric categories used to label content within photos. For example, Category 2027 is for Entertainment, Trip, Travel, Museum, Beach Activity, etc. I think the quality scores are particularly interesting. There are scores for noise, composition, lively color, harmonious color, pleasant lighting/pattern/perspective, and a bunch more. I bet Apple’s acquisition of Regaind contributed to this.
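If you want to poke around a database like this yourself, a good first step is simply listing its tables. Here is a minimal Python sketch that does that for any SQLite file. The function name `list_tables` and the `db_path` argument are placeholders of mine, not anything from the article, and the location and table names of the Photos database are not given here, so none are assumed.

```python
import sqlite3
from pathlib import Path


def list_tables(db_path):
    """Return the names of all tables in the SQLite file at db_path.

    db_path is a placeholder: point it at a copy of whatever
    SQLite file you want to inspect.
    """
    # Build a file: URI and open it read-only so this sketch
    # cannot modify the database it is looking at.
    uri = Path(db_path).resolve().as_uri() + "?mode=ro"
    conn = sqlite3.connect(uri, uri=True)
    try:
        # sqlite_master is SQLite's built-in catalog of schema objects.
        rows = conn.execute(
            "SELECT name FROM sqlite_master "
            "WHERE type = 'table' ORDER BY name"
        ).fetchall()
    finally:
        conn.close()
    return [name for (name,) in rows]
```

It is safer to run this against a copy of the file rather than the live database, even with the read-only flag set.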