Let’s walk through five of the best encryption software options for Mac, helping you protect and secure the sensitive data on your computer.

encryption

How-To Christmas and Predicting 2023 - TMO Daily Observations 2022-12-20

If you’re giving electronics this holiday season, there are some steps you might want to take ahead of time. TMO Managing Editor Jeff Butts fills us in. Plus – Wedbush analyst Daniel Ives has a few predictions for the new year. Ken and Jeff kick them around.

More End-to-End Encryption and Giving the Gift of Will Smith - TMO Daily Observations 2022-12-09

Dreamlight Valley resident and TMO Managing Editor Jeff Butts joins Ken today to discuss Apple’s Advanced Data Protection for iCloud. What is it, who needs it, and how does one activate it? Plus – Will Smith is promoting Apple TV+. Too soon?

FBI Unhappy With Apple’s End-To-End Encrypted iCloud Backup

The FBI expressed its deep concern about the implications of Apple’s upcoming end-to-end encrypted iCloud backup feature.

Shootings in Buffalo and Uvalde Bring Back Arguments Concerning End-to-End Encryption

The tragic attacks in Buffalo, New York and Uvalde, Texas are rekindling arguments concerning social media and end-to-end encryption.

Change Your Signal Number in Company's Latest Update

It’s now possible to change your Signal number, thanks to an update the company recently released and wrote about on Monday.

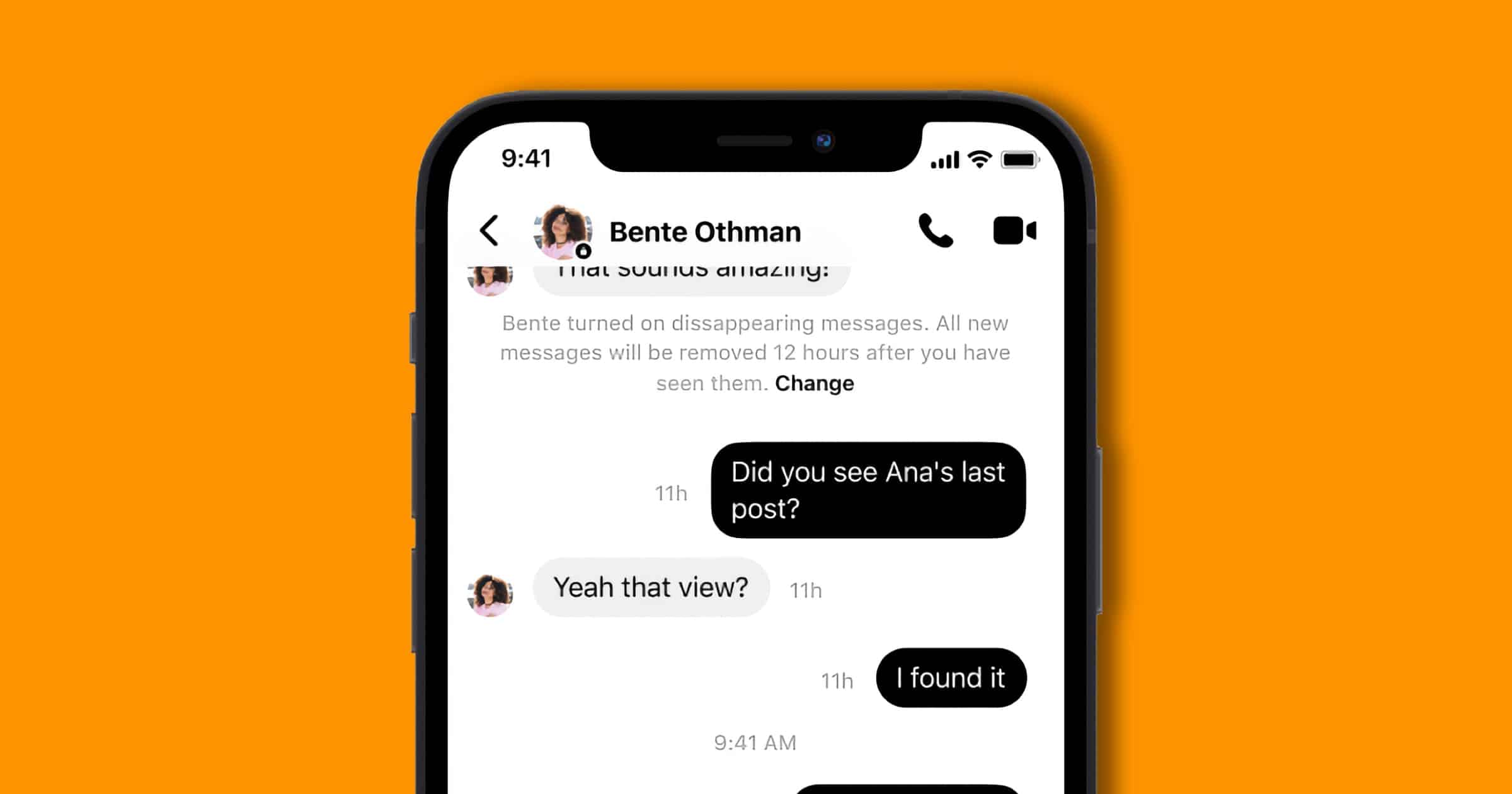

Facebook Rolls Out End-to-End Encrypted Chats for Everyone

End-to-end encrypted chats are now available for all users of Facebook Messenger, the company announced. This includes group chats and calls.

Last year, we announced that we began testing end-to-end encryption for group chats, including voice and video calls. We’re excited to announce that this feature is available to everyone. Now you can choose to connect with your friends and family in a private and secure way.

These secure chats remain opt-in only, instead of being encrypted by default as they are in actual private messaging apps.

Appeals Court Says Patent Lawsuit Related to iMessage Encryption Can Proceed

A US appeals court says a patent lawsuit against Apple can proceed. It upheld a decision that patents related to end-to-end encryption are valid.

Security Friday: This Week in (Sad) Data Breaches – TMO Daily Observations 2022-01-21

Andrew Orr joins host Kelly Guimont to discuss a Safari data leak, encrypted messaging, and as always, a new data breach.

Report Reveals UK Government Push to Attack Encrypted Messengers

The UK government plans an advertising campaign to attack messaging apps that use end-to-end encryption. The details were published recently.

Proton Shares Year in Review With Product Plans for 2022

Proton, maker of ProtonMail and ProtonVPN, has shared its 2021 Year in Review. The company has also shared its plans for the year ahead.

Pepcom 2022: Wemo Announces a Smart Video Doorbell for HomeKit

Wemo’s new smart video doorbell announced at Pepcom 2022 will support Apple’s HomeKit. Videos will be stored in a person’s iCloud+ account.

Everything You Wanted to Know About How Encrypted Email Works

ProtonMail published a nice blog post explaining how encrypted email works, and the various protocols that companies use.

End-to-end encryption for messages sent between ProtonMail users is automatic, and our integrated OpenPGP support makes it easy to send and receive PGP-encrypted E2EE messages to people that use PGP with other email providers. Proton also informs you when your messages are protected by E2EE with a small blue padlock (for other ProtonMail users) or green padlock (for OpenPGP users).

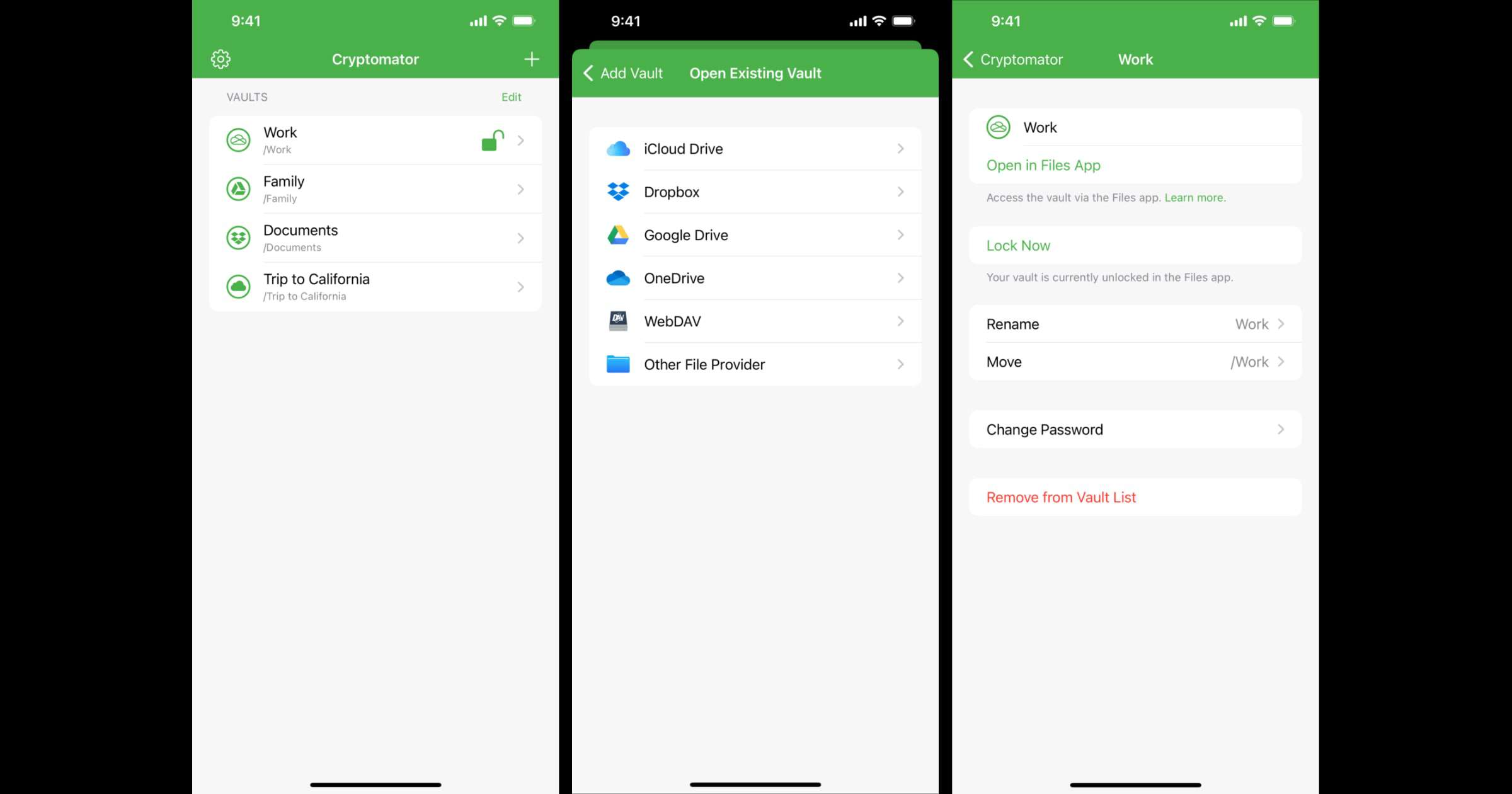

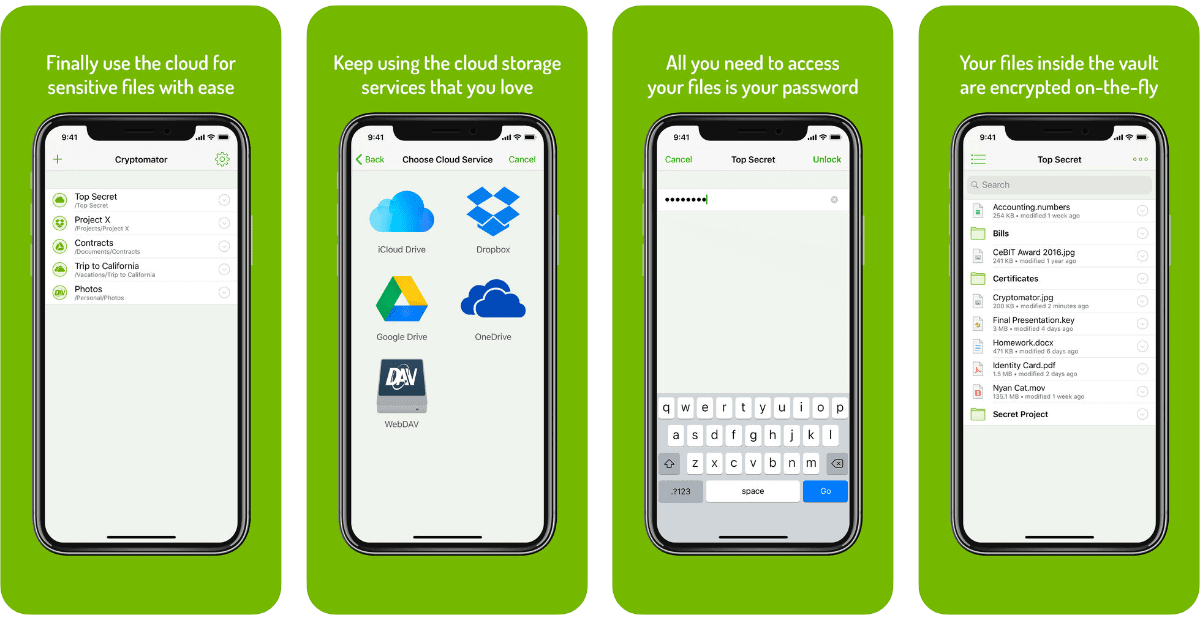

'Cryptomator' 2.0 is Here and it Integrates Into iOS Files App

The team behind Cryptomator has rewritten the app in Swift, and with version 2.0 the app is completely integrated into the Files app. This means that your vaults are directly accessible from there. For example, you can now save and edit a Word document directly in an encrypted vault via the Files app. In addition, features like thumbnails, grid view, swiping through images, and drag & drop are possible with the new app. To summarize, Cryptomator gives you end-to-end encryption for your files. You can store them in Google Drive, iCloud Drive, Dropbox, and more. You can also store them offline in the Files app or on a hard drive.

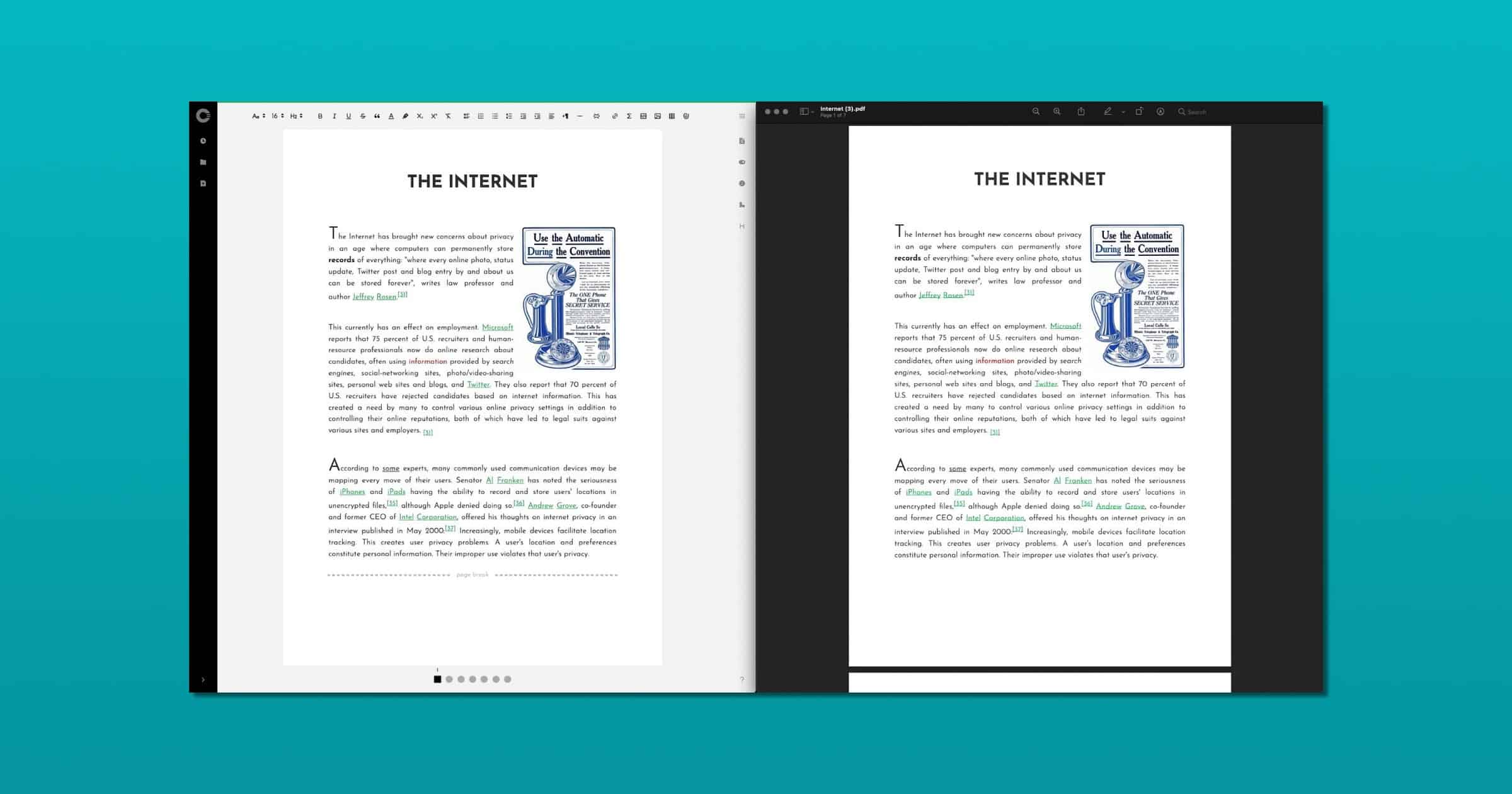

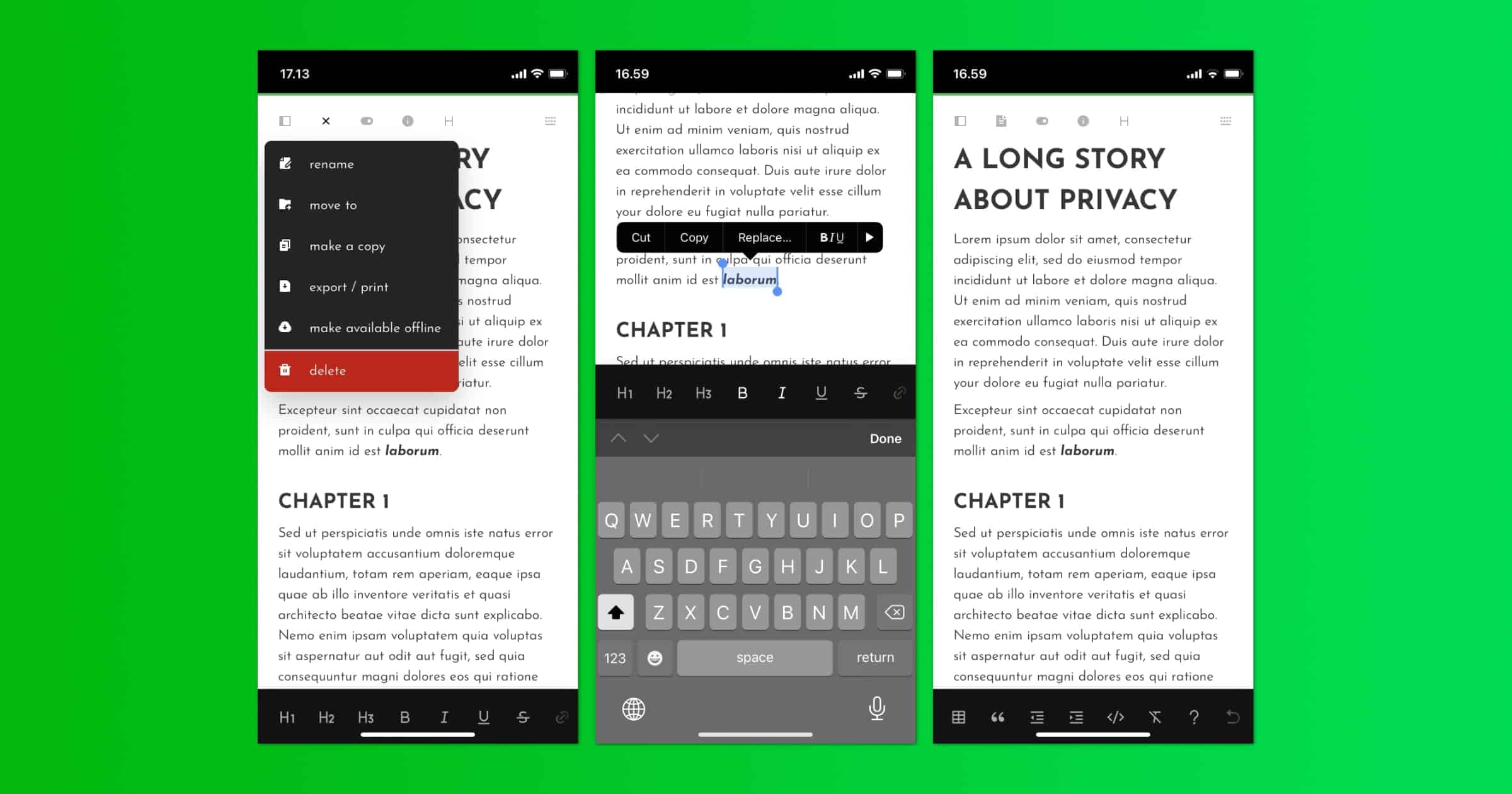

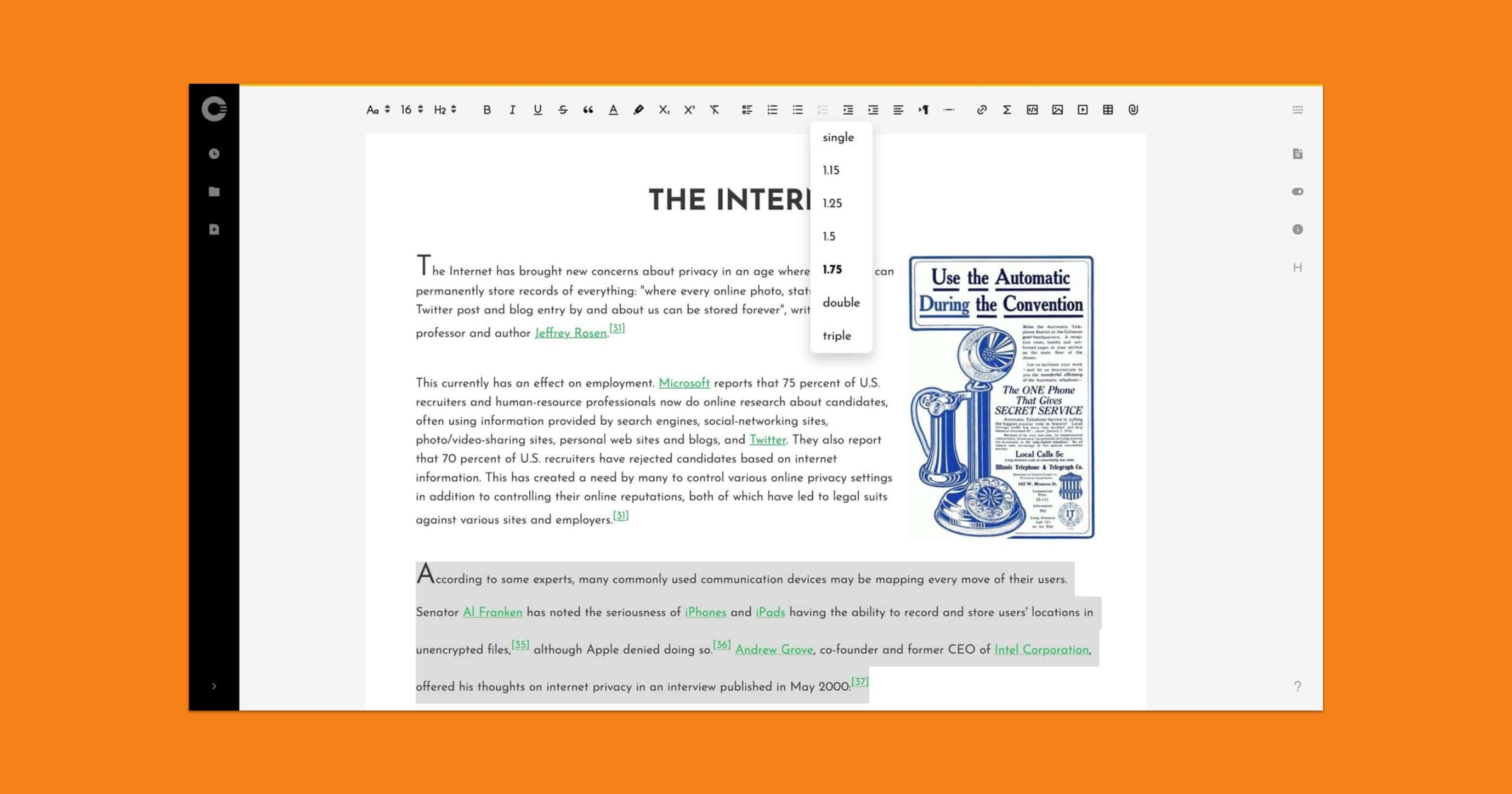

Cryptee Update Brings Encrypted PDFs and Print-Accurate Editing

An update to Cryptee, a platform for encrypted photos and documents, brings Paper Mode, a print-accurate view for your documents. It also adds editing for encrypted PDFs.

You can now work on your documents in Cryptee Docs, using a print-accurate paper view, by choosing paper sizes like A4 / A3 / US Letter / US Legal etc, just like the way you would in Microsoft Word or Google Docs.

While exporting your documents as PDF files, you can now easily set a key, and encrypt the PDFs. These encrypted PDFs can be opened using any PDF viewer, on all operating systems and PDF viewer apps.

US Government Still Wants an iOS Backdoor

Some thought efforts to force an iOS backdoor were over. In fact, Cupertino may have won the battle in 2016, but the war rages on. Jeff Butts outlines the latest stalled efforts, and how they are probably just a setback for the government.

Facebook Adds End-To-End Encryption to Messenger Calls, Instagram DMs

Facebook has begun rolling out end-to-end encryption for Messenger calls and Instagram DMs, bringing greater security and privacy to users.

4 Alternatives to iCloud Photos That Don’t Scan Your Content

Does the thought of your Apple device scanning your iCloud Photos make you uneasy? Good news! Here are four private alternatives.

Security Friday: News, Data Leaks, and Betas – TMO Daily Observations 2021-07-02

Andrew Orr joins host Kelly Guimont to discuss Security Friday news and data privacy, and to talk about some public betas available for testing.

Cryptomator’s Major 2.0 Upgrade is Available in TestFlight, Now Open Source

File encryption app Cryptomator is readying a major 2.0 upgrade and interested users can test the beta within Apple’s TestFlight app on iOS.

Cryptee's Cloud Document Editor Gets Mobile Interface

Cryptee announced version 3.1 of the document editor for its encrypted cloud platform. The UI has been redesigned for mobile users.

Encrypted Email Service ‘Tutanota’ Desktop Apps Exit Beta

Tutanota is an end-to-end encrypted email service, and its desktop clients are exiting beta after two and a half years.

Cryptee Updates With Line Spacing, Quick Document Access

Encrypted storage provider Crypt.ee is back with updates like remembering encryption keys, quick access to recent documents, and line spacing in documents.

We’re slowly getting ready to release our paper-mode for Cryptee Docs. It will allow you to work print-accurately on popular paper sizes like A4 / U.S. Letter etc, much like your favorite rich text editors like Microsoft Word™. But we thought perhaps we can release some of these paper-specific features ahead of time.